For decades, the pursuit of granular consumer insights has been a cornerstone of strategic business decision-making, yet it has invariably forced a trade-off among depth, cost, and speed. Uncovering the nuanced preferences, behaviors, and motivations of target audiences traditionally demanded significant financial investment, often ranging into the tens or even hundreds of thousands of dollars, alongside a time commitment spanning several months. This protracted timeline frequently meant that by the time critical insights were distilled and delivered, market conditions had shifted, subtly or dramatically, rendering the findings partially or wholly obsolete. A profound transformation is now underway. Generative artificial intelligence (AI), particularly large language models (LLMs), is reshaping this calculus, promising to compress research timelines from months to mere days and to inject new agility into the $153 billion global insights industry.

Historically, the marketing research pipeline has been a multi-stage, labor-intensive process. It typically commences with a precise problem definition, followed by meticulous research and study design, which includes sample selection and instrument development. The subsequent data collection phase, whether through qualitative methods like interviews and focus groups or quantitative approaches such as surveys, is often the most resource-intensive. This is then followed by rigorous data analysis and, finally, the synthesis and delivery of actionable insights. Each stage, reliant on human expertise and coordination, contributes to the overall duration and expense. For instance, a comprehensive global survey involving multiple markets could easily take six to eight months, from conceptualization to final report, with costs escalating rapidly based on sample size, geographic spread, and data collection methodologies. Qualitative studies, while offering rich detail, are inherently limited in scale due to the intensive nature of human moderation and analysis.

Generative AI is proving to be a powerful catalyst in dismantling these traditional bottlenecks, ushering in an era where speed and cost-efficiency no longer necessitate a compromise on quality or depth. Just as AI-driven drug discovery has dramatically shortened the journey from candidate screening to clinical-trial readiness in pharmaceuticals, LLMs are accelerating the progression from initial market exploration to actionable insights in consumer intelligence. Critically, this integration maintains a "human in the loop" approach: strategic oversight and problem definition remain firmly within the domain of human decision-makers, while AI augments and automates the more laborious, data-intensive stages.

One of the most revolutionary applications of LLMs in market research is the emergence of "synthetic consumers" or "digital twins." These are sophisticated AI models, meticulously trained on vast datasets of human behavior, demographic information, psychographic profiles, and historical consumer responses, enabling them to mimic the characteristics and decision-making patterns of specific target demographics. Researchers can construct entire virtual populations, representing diverse segments from Gen Z urbanites to affluent retirees in rural areas. Instead of recruiting, incentivizing, and conducting real-world trials with human participants, which can be slow and expensive, companies can now present new product concepts, advertising campaigns, pricing models, or user interface designs to these synthetic twins. The AI models can then generate realistic feedback, simulate purchasing decisions, and even articulate nuanced opinions, all within a fraction of the time and cost. This capability allows for rapid, iterative concept testing, A/B testing of marketing messages, and the exploration of myriad scenarios without the logistical complexities associated with human panels. While the representativeness and potential biases of these synthetic populations remain a crucial area of validation, pioneering academic work highlights the potential for LLMs to effectively simulate human samples, offering a powerful tool for early-stage ideation and validation.
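The concept-testing loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any vendor's product: the `Persona` fields, the prompt wording, the BUY/PASS answer format, and the `fake_model` stand-in are all hypothetical, and a real study would replace `fake_model` with a call to an actual LLM and validate the results against human respondents.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Persona:
    """Hypothetical description of one synthetic consumer."""
    segment: str
    age: int
    location: str
    traits: str


def build_prompt(persona: Persona, concept: str) -> str:
    """Turn a persona and a product concept into a single prompt string."""
    return (
        f"You are a {persona.age}-year-old consumer living in {persona.location} "
        f"({persona.segment}; {persona.traits}). "
        f"Here is a product concept: {concept} "
        "Answer with exactly one word, BUY or PASS, then one sentence of reasoning."
    )


def run_concept_test(
    personas: Iterable[Persona],
    concept: str,
    ask: Callable[[str], str],
) -> Counter:
    """Ask every persona about the concept and tally verdicts per segment."""
    tally: Counter = Counter()
    for persona in personas:
        reply = ask(build_prompt(persona, concept)).strip().upper()
        if reply.startswith("BUY"):
            verdict = "BUY"
        elif reply.startswith("PASS"):
            verdict = "PASS"
        else:
            verdict = "UNCLEAR"  # keep malformed replies visible instead of guessing
        tally[(persona.segment, verdict)] += 1
    return tally


def fake_model(prompt: str) -> str:
    """Stand-in for an LLM call (assumption): a fixed rule, not a real model."""
    if "urban" in prompt:
        return "BUY. It fits my routine."
    return "PASS. Not relevant to me."


personas = [
    Persona("Gen Z urbanite", 22, "a city apartment", "price-sensitive"),
    Persona("affluent retiree", 68, "a rural town", "brand-loyal"),
]
tally = run_concept_test(personas, "A refillable cold-brew subscription.", fake_model)
```

Passing the model in as a plain callable keeps the tallying logic independent of any particular provider, which also makes it easy to rerun the same personas against human panel data for comparison.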

Beyond simulation, LLMs are transforming qualitative research, enabling it to be conducted at unprecedented scale. Traditional qualitative methods, such as one-on-one interviews and focus groups, are invaluable for uncovering rich, nuanced insights but are inherently limited by the number of participants and the capacity of human moderators and analysts. Generative AI is now empowering "AI-moderated interviews," where sophisticated bots can conduct structured or semi-structured conversations with a large number of respondents. These AI moderators can ask open-ended questions, follow up with probing inquiries based on previous responses, and adapt the conversation flow dynamically. The benefits are manifold: geographical barriers are eliminated, allowing for diverse global participation; human moderator bias, conscious or unconscious, is significantly reduced; and the volume of qualitative data that can be collected, transcribed, and analyzed becomes far larger. LLMs can then process these vast datasets, identifying recurring themes, sentiment trends, and latent insights that might be missed by human analysts sifting through hundreds or thousands of pages of transcripts. This capability is particularly impactful for multinational corporations seeking to understand cultural nuances and preferences across disparate markets efficiently.

Furthermore, the inherent strength of LLMs in processing and understanding unstructured data is proving transformative. A significant portion of consumer insight resides not in neatly organized survey responses but in free-form text, audio, and even video. Social media comments, online product reviews, customer service call transcripts, forum discussions, and open-ended survey responses represent a treasure trove of authentic, unsolicited feedback. Prior to advanced AI, extracting meaningful, scalable insights from this deluge of unstructured data was a monumental task. LLMs, with their ability to comprehend context, infer sentiment, identify entities, and summarize complex information, can now rapidly analyze these diverse data sources. They can pinpoint emerging trends, identify pain points, gauge brand perception, and even conduct sophisticated competitive intelligence by monitoring public discourse surrounding rivals. This deep dive into the ‘voice of the customer’ provides a holistic understanding that quantitative data alone often cannot capture, enabling businesses to react swiftly to market shifts and customer feedback.

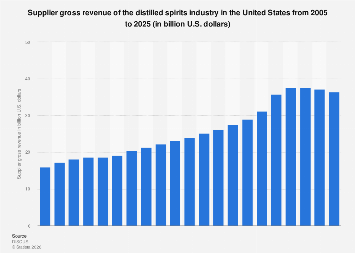

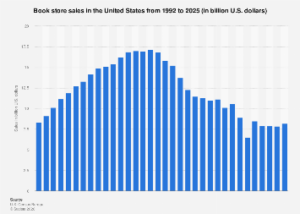

The economic implications of these advancements are profound. The global market research industry, valued at over $150 billion, is on the cusp of significant restructuring. Companies that strategically integrate generative AI into their insight generation processes stand to gain a considerable competitive advantage. Faster access to deeper insights translates directly into accelerated product development cycles, more finely tuned marketing campaigns, optimized pricing strategies, and ultimately, enhanced market responsiveness and profitability. This technological leap also democratizes sophisticated research capabilities. Smaller businesses and startups, previously constrained by budget and time, can now leverage AI tools to conduct high-quality market research that was once the exclusive domain of large enterprises with substantial research departments or budgets for expensive agencies. This shift fosters greater innovation across the business landscape.

The evolution of the workforce within this industry is also inevitable. Rather than displacing human expertise entirely, AI is reconfiguring roles. The "human in the loop" becomes paramount for higher-level strategic functions: defining the core research problem, designing the overarching methodology, interpreting the nuanced outputs from AI, validating synthetic data against real-world observations, and, critically, ensuring ethical considerations are addressed. Human professionals shift away from laborious data collection and rudimentary analysis towards more complex problem-solving, creative insight generation, and strategic application of AI-derived intelligence. This necessitates a new skill set for marketing and research professionals, emphasizing AI literacy, data science fundamentals, and critical thinking.

However, the rapid adoption of generative AI in market research is not without its challenges and ethical considerations. A primary concern is the potential for bias. If LLMs are trained on historical data that reflects societal biases or skewed demographic representation, the insights generated by synthetic consumers or AI-moderated interviews could inadvertently perpetuate or amplify these biases, leading to flawed strategies and potentially exclusionary products or campaigns. Accuracy is a second concern: "hallucinations," in which AI generates plausible but entirely fabricated information, demand robust validation mechanisms. Data privacy and security become even more critical as vast amounts of consumer data, real and synthetic, are processed by AI systems. Furthermore, transparency and explainability in AI’s decision-making process are vital for trust and accountability. Over-reliance on synthetic data without adequate real-world validation could lead to an echo chamber effect, divorcing insights from authentic consumer realities.
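One simple way to make "real-world validation" concrete is to put the same closed-ended question to a small human sample and to the synthetic panel, then measure how far apart the two answer distributions are. The sketch below uses total variation distance for that comparison. The choice of metric, the sample answers, and the 0.1 tolerance are illustrative assumptions, not an industry standard.

```python
from collections import Counter
from typing import Dict, Sequence


def answer_shares(responses: Sequence[str]) -> Dict[str, float]:
    """Convert raw answers into the share of respondents choosing each option."""
    if not responses:
        raise ValueError("need at least one response")
    counts = Counter(responses)
    total = sum(counts.values())
    return {option: n / total for option, n in counts.items()}


def total_variation_distance(real: Sequence[str], synthetic: Sequence[str]) -> float:
    """Half the sum of absolute share differences: 0 is identical, 1 is disjoint."""
    p, q = answer_shares(real), answer_shares(synthetic)
    options = set(p) | set(q)
    return 0.5 * sum(abs(p.get(o, 0.0) - q.get(o, 0.0)) for o in options)


# Made-up answers to one question, for illustration only.
real_answers = ["buy"] * 6 + ["pass"] * 4
synthetic_answers = ["buy"] * 8 + ["pass"] * 2

distance = total_variation_distance(real_answers, synthetic_answers)
TOLERANCE = 0.1  # hypothetical threshold; a real study would set its own
needs_review = distance > TOLERANCE
```

In this made-up example the synthetic panel overstates purchase intent by 20 percentage points, so the check flags it for human review rather than letting the synthetic result stand on its own.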

Looking ahead, the integration of generative AI is poised to become an indispensable component of the consumer intelligence landscape. The future will likely see increasingly sophisticated hybrid models, where AI and human expertise seamlessly collaborate, each augmenting the other’s strengths. Predictive analytics powered by LLMs will grow more accurate, anticipating market shifts and consumer needs with greater precision. As AI tools become more intuitive and accessible, they will foster a culture of continuous experimentation and learning within organizations. Ultimately, generative AI is not merely a tool for efficiency; it represents a fundamental re-imagining of how businesses understand, engage with, and strategically respond to their consumers, enabling a level of agility and insight previously unattainable, and ensuring that strategic decisions are grounded in the most current and comprehensive understanding of the market.