In March 2018, the world witnessed a stark illustration of artificial intelligence’s ethical and accountability dilemmas when a self-driving Uber test vehicle struck and killed a pedestrian at night in Tempe, Arizona. The immediate aftermath was a maelstrom of questions: Was the safety driver, who was reportedly distracted, solely at fault? Did the engineers who programmed the vehicle’s object detection and emergency braking systems bear the primary responsibility? What about Uber’s executive leadership, or the regulatory bodies that permitted autonomous vehicle testing on public roads? The difficulty of pinpointing a single culprit in this incident underscored a fundamental shift in how responsibility must be understood and attributed as intelligent technologies increasingly permeate organizational decision-making.

As enterprises across the globe integrate increasingly sophisticated autonomous systems – from high-frequency trading bots and algorithmic credit assessment tools to AI-powered medical diagnostics and supply chain optimizers – the traditional, linear models of accountability are proving insufficient. Decisions, once a clear outcome of human intent and action, now emerge from an intricate dance between human operators, complex algorithms, vast datasets, and dynamic operational environments. This distributed agency introduces new layers of influence, opacity, and unpredictability, fundamentally challenging the notion that a single individual can be held solely accountable when things go awry. For today’s leaders, the imperative is no longer a futile search for a solitary scapegoat but rather the construction of a shared narrative – a comprehensive understanding of how activities, underlying assumptions, technological designs, and cultural contexts together shaped an outcome. This collaborative revisiting of decision pathways, termed "narrative responsibility," is becoming indispensable for fostering organizational learning, enhancing resilience, and navigating the inherent complexities of AI-enabled operations.

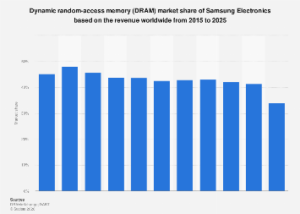

The global artificial intelligence market, projected by some analysts to exceed $1.8 trillion by 2030, reflects a profound technological transformation reshaping industries from finance to healthcare, logistics to human resources. AI systems are no longer merely tools but active participants in critical decision processes. Algorithmic trading platforms execute orders in fractions of a second, often without direct human oversight. AI-powered diagnostic tools assist medical professionals in identifying diseases. Predictive maintenance systems anticipate equipment failures in manufacturing. While these advancements promise gains in efficiency, speed, and analytical depth, they simultaneously usher in a new era of systemic risk. The "black box" problem, where even developers struggle to fully explain an AI’s decision-making logic, coupled with emergent behaviors in complex adaptive systems, makes it exceedingly difficult to trace cause and effect along a simple linear path.

Classic theories of responsibility, largely forged in the industrial age, operate on three core assumptions: first, that events unfold in a fundamentally linear fashion, adhering to clear cause-and-effect principles; second, that decision-makers operate within a shared, traceable space and time, allowing for direct links between actions and consequences; and third, that responsibility can be precisely attributed backward to an individual whose intentions and choices directly drive outcomes. These assumptions underpinned organizational structures where a hierarchy of command and control ensured clear lines of authority and accountability. When a manufacturing defect occurred, a specific engineer or production manager could be identified. When a financial loss materialized, a particular trader or portfolio manager might be held responsible.

However, the advent of AI shatters these foundational premises. The "decision" in an AI system is often a culmination of design choices by multiple teams, training data collected over years, operational parameters set by different departments, and the dynamic interaction of the algorithm with its environment. This distributed agency means that a failure is rarely the fault of one person or even one component, but rather an emergent property of the entire socio-technical system. Organizations often default to traditional models by holding a senior leader personally responsible, as seen after the fatal crashes of Boeing’s 737 MAX aircraft in 2018 and 2019, which led to the dismissal of CEO Dennis Muilenburg. While such actions might provide a visible response to public pressure, they frequently fail to address the deeper, systemic issues related to design flaws, certification processes, organizational culture, or the intricate human-machine interfaces that contributed to the tragedy. This "culprit culture" often stifles genuine learning, fosters a climate of fear, and prevents the necessary systemic changes required for future resilience.

This is where the paradigm of narrative responsibility offers a crucial alternative. Instead of focusing on identifying a single guilty party, it advocates for a collaborative, comprehensive inquiry into the entire sequence of events, influences, and interactions that led to an outcome. It involves constructing a detailed "story" of the failure, meticulously mapping the contributions of human actors, algorithmic logic, data inputs, environmental variables, and organizational processes. This framework encourages a shift from punitive blame to collective understanding and learning. By distributing ownership of the outcome across the people, teams, and systems involved, organizations can move beyond superficial fixes to address root causes embedded within their systems and cultures. This approach is not about absolving individuals of their duties but about recognizing that in complex AI systems, responsibility is inherently shared and diffused.

Operationalizing narrative responsibility requires a fundamental shift in organizational culture and practice. Firstly, it necessitates robust, multi-disciplinary post-incident analysis frameworks that go beyond simple root cause identification. These frameworks must engage AI developers, data scientists, ethicists, legal experts, business operations personnel, and domain specialists to reconstruct the event holistically. The aviation industry’s long-standing safety culture, which prioritizes learning from incidents over blaming individuals, offers a valuable blueprint, though it must be adapted for the unique complexities of AI. Secondly, it demands greater transparency and explainability from AI systems themselves, the goal of the field known as explainable AI (XAI). Organizations must strive to document AI design choices, data provenance, training methodologies, and decision-making logic in ways that are interpretable by humans. This allows for a clearer understanding of how and why an AI system arrived at a particular decision, even if the underlying computations remain complex.
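To make the documentation practice concrete, the following is a minimal sketch of how contributions to an outcome might be recorded and later reassembled into a shared timeline. It is an illustration under stated assumptions, not an established standard: the class names `DecisionRecord` and `IncidentNarrative`, the `actor_kind` categories, and the sample entries are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List


@dataclass(frozen=True)
class DecisionRecord:
    """One contribution to an outcome (hypothetical schema)."""
    timestamp: datetime
    actor: str        # who or what acted, e.g. a team or a model version
    actor_kind: str   # assumed categories: "human", "model", "data", "process"
    action: str       # what was done
    rationale: str    # human-readable reason, however partial


@dataclass
class IncidentNarrative:
    """Collects records from every contributor to a single incident."""
    incident_id: str
    records: List[DecisionRecord] = field(default_factory=list)

    def add(self, record: DecisionRecord) -> None:
        self.records.append(record)

    def timeline(self) -> List[DecisionRecord]:
        # Order contributions chronologically, regardless of entry order.
        return sorted(self.records, key=lambda r: r.timestamp)

    def contributors_by_kind(self) -> Dict[str, List[str]]:
        # Group distinct actors by kind to show how agency was distributed.
        grouped = defaultdict(set)
        for record in self.records:
            grouped[record.actor_kind].add(record.actor)
        return {kind: sorted(actors) for kind, actors in grouped.items()}


# Hypothetical sample data, entered out of chronological order on purpose.
narrative = IncidentNarrative("incident-001")
narrative.add(DecisionRecord(
    datetime(2024, 1, 10, 9, 5, tzinfo=timezone.utc),
    "credit-model-v2", "model",
    "declined application", "score below configured threshold",
))
narrative.add(DecisionRecord(
    datetime(2024, 1, 10, 9, 0, tzinfo=timezone.utc),
    "risk-team", "human",
    "set approval threshold", "quarterly policy review",
))

for record in narrative.timeline():
    print(record.timestamp.isoformat(), record.actor_kind, record.actor, "-", record.action)
```

The design choice worth noting is that human and algorithmic contributions share one record type, so a post-incident review reads them side by side rather than treating the model's behavior as separate from the human decisions that configured it.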

Furthermore, embedding narrative responsibility means integrating AI-specific risks into enterprise-wide risk management frameworks. This includes proactive measures such as "red teaming" – where adversarial teams attempt to find vulnerabilities in AI systems – and pre-mortem exercises to anticipate potential failure modes before deployment. Ethical AI governance structures, including internal committees, clear codes of conduct, and continuous employee training on AI’s capabilities and limitations, are also critical. Leaders must cultivate an environment of psychological safety where individuals feel empowered to report errors or concerns without fear of reprisal, knowing that the organization’s primary goal is systemic improvement.

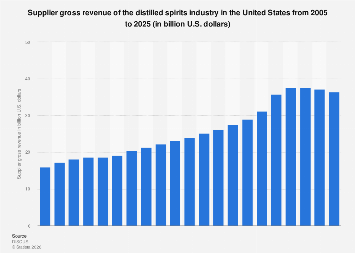

The economic and societal implications of embracing narrative responsibility are profound. Globally, regulatory bodies are grappling with the challenge of governing AI. The European Union’s AI Act, for instance, represents a landmark effort to classify AI systems by risk level and impose stringent requirements for transparency, human oversight, and accountability. Other nations, including the United States and China, are also developing their own regulatory frameworks. Organizations that proactively adopt narrative responsibility principles will not only enhance their compliance posture but also gain a significant competitive advantage. Building trust in AI systems – among consumers, employees, and regulators – is paramount for widespread adoption and innovation. Companies with a demonstrable commitment to responsible AI practices will attract top talent, secure investment, and ultimately capture greater market share. Conversely, the economic costs of AI failures, ranging from reputational damage and regulatory fines to costly legal battles and loss of public trust, can be immense.

Ultimately, the proliferation of AI is not merely a technological trend but a fundamental redefinition of how organizations operate, make decisions, and manage risk. The challenge is not to halt the advancement of AI but to evolve our management and governance frameworks to keep pace with its transformative power. By moving beyond the archaic impulse to assign blame and instead cultivating a culture of narrative responsibility, leaders can foster resilience, accelerate learning, and ensure that AI serves as a force for progress, safely and ethically, for enterprises and society at large. This new approach to accountability is not just a moral imperative; it is an economic necessity for thriving in the age of intelligent machines.