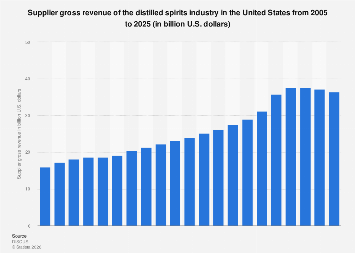

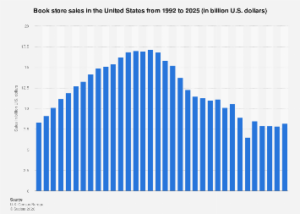

The traditional paradigm of market research, often characterized by its protracted timelines and substantial financial outlays, is undergoing a profound transformation driven by the advent of generative artificial intelligence. For decades, organizations have grappled with the dilemma of needing deep consumer insights to inform strategic decisions, only to find that the data gathering and analysis process could span months, by which time market dynamics or competitive landscapes might have irrevocably shifted. This inherent friction between the need for speed and the demands of rigorous research has long presented a significant barrier to agile decision-making. However, the emergence of large language models (LLMs) is fundamentally altering this calculus, compressing research timelines from months to mere days and redefining the capabilities of the global market insights sector, estimated to be worth $153 billion in 2025.

Central to this paradigm shift are several innovative applications of generative AI that streamline and enhance every stage of the research pipeline. Historically, a typical market research project, encompassing problem definition, study design, sample selection, data collection, analysis, and insights delivery, has been a labor-intensive endeavor. Whether qualitative (interviews and focus groups) or quantitative (surveys), these studies have commanded budgets ranging from tens to hundreds of thousands of dollars. Generative AI is now not only making the entire process more efficient and cost-effective but also enabling novel approaches to insight generation that were previously infeasible. The shift mirrors the acceleration seen in fields like AI-driven drug discovery, where the path from candidate screening to clinical trials has been significantly shortened.

One of the most disruptive innovations is the deployment of synthetic consumer "digital twins." These AI-powered entities are engineered to embody specific demographic, psychographic, and behavioral profiles, acting as virtual respondents for rapid concept testing and market simulation. By training or conditioning LLMs on large datasets of consumer preferences, historical purchasing behaviors, and demographic information, researchers can create digital personas that respond plausibly to product concepts, marketing messages, or pricing strategies. This capability allows businesses to test far more scenarios and iterations than fieldwork would permit, and to do so almost immediately, sidestepping the logistical complexities and costs associated with recruiting and surveying human participants. For instance, a consumer packaged goods company could virtually test hundreds of packaging designs or advertising taglines across diverse simulated consumer segments in a single afternoon, generating immediate feedback on potential appeal and perceived value. While these synthetic respondents offer unparalleled speed and scalability, a critical limitation remains: they tend toward "average" or most probable responses, which can overlook the anomalous yet deeply insightful outliers that often drive true innovation in qualitative research. The challenge lies in designing these digital twins to surface unexpected reactions rather than merely confirming existing hypotheses.
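To make the digital-twin workflow described above more concrete, here is a minimal sketch of how a persona profile might be turned into a prompt and crossed with a set of concepts. Every name in it (`Persona`, `build_prompt`, `run_concept_test`, `echo_model`) is hypothetical, and the model call is replaced by a stand-in function, since the article does not name a specific LLM or API.

```python
from dataclasses import dataclass
from itertools import product
from typing import Callable, Dict, Iterable, Tuple


@dataclass(frozen=True)
class Persona:
    """Hypothetical profile for one synthetic respondent."""
    name: str
    age_range: str
    traits: Tuple[str, ...]
    shopping_habits: str


def build_prompt(persona: Persona, concept: str) -> str:
    """Combine a persona profile and a concept into one prompt string."""
    traits = ", ".join(persona.traits)
    return (
        f"You are {persona.name}, aged {persona.age_range}. "
        f"Traits: {traits}. Shopping habits: {persona.shopping_habits}. "
        f"React in the first person to this concept: {concept}"
    )


def run_concept_test(
    personas: Iterable[Persona],
    concepts: Iterable[str],
    ask_model: Callable[[str], str],
) -> Dict[Tuple[str, str], str]:
    """Ask every persona about every concept; ask_model is injected."""
    return {
        (p.name, c): ask_model(build_prompt(p, c))
        for p, c in product(list(personas), list(concepts))
    }


def echo_model(prompt: str) -> str:
    """Stand-in for a real LLM call (assumption: swap in your own client)."""
    return f"[simulated reply to a {len(prompt)}-character prompt]"


if __name__ == "__main__":
    panel = [
        Persona("Budget Parent", "30-40", ("price-sensitive",), "weekly bulk shop"),
        Persona("Urban Student", "18-24", ("trend-driven",), "small frequent purchases"),
    ]
    results = run_concept_test(panel, ["Recyclable pouch", "Glass jar"], echo_model)
    for key, reply in results.items():
        print(key, reply)
```

Injecting `ask_model` keeps the sketch independent of any vendor and makes it easy to log each prompt alongside its reply, which helps when someone other than the persona designer reviews the output.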

Beyond synthetic respondents, LLMs are revolutionizing qualitative research through AI-moderated interviews at scale. Traditionally, in-depth interviews and focus groups were limited by the availability of skilled human moderators, geographical constraints, and the sheer time required for transcription and manual analysis. Generative AI now enables the orchestration of hundreds, even thousands, of qualitative interviews simultaneously. An AI moderator, powered by an LLM, can engage respondents in natural language conversations, following a dynamic discussion guide, asking clarifying questions, and probing deeper into specific responses. This not only democratizes access to rich qualitative data for businesses of all sizes but also ensures a degree of moderation consistency that is difficult to achieve with human interviewers. However, the efficacy of AI moderation hinges on sophisticated architectural design. Simple LLM prompting can lead to issues like "lost in the middle," where the model makes poorer use of material buried in the middle of a long context so that earlier conversation points fade over extended interactions, or to a failure to adhere strictly to moderation protocols. Advanced implementations rely on multi-agent systems, where a "guider" agent manages the research objectives and probing strategy, while a separate "communicator" agent handles conversational phrasing, ensuring both methodological discipline and natural dialogue flow.
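The guider and communicator split can be illustrated with a small sketch. It is not a reference implementation: in a real system both roles would typically be LLM-backed, whereas here the guider uses a simple word-count heuristic to decide when to probe and the communicator uses fixed templates. The class and function names (`Guider`, `phrase`, `run_interview`) and the five-word threshold are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Guider:
    """Owns the discussion guide and decides what to do next."""
    topics: List[str]
    max_probes: int = 1      # probes allowed per topic (assumed policy)
    _index: int = 0
    _probes_used: int = 0

    def next_move(self, last_answer: Optional[str]) -> Optional[Tuple[str, str]]:
        """Return (action, topic), or None when the guide is finished."""
        if not self.topics:
            return None
        if last_answer is None:
            return ("ask", self.topics[0])
        # Heuristic stand-in for an LLM judgment: short answers get a probe.
        if len(last_answer.split()) < 5 and self._probes_used < self.max_probes:
            self._probes_used += 1
            return ("probe", self.topics[self._index])
        self._index += 1
        self._probes_used = 0
        if self._index >= len(self.topics):
            return None
        return ("ask", self.topics[self._index])


def phrase(action: str, topic: str) -> str:
    """Communicator: turns the guider's decision into conversational text."""
    if action == "probe":
        return f"Could you tell me a bit more about {topic}?"
    return f"I'd like to hear your thoughts on {topic}."


def run_interview(
    guider: Guider, respond: Callable[[str], str]
) -> List[Tuple[str, str]]:
    """Alternate guider decisions and respondent answers until the guide ends."""
    transcript: List[Tuple[str, str]] = []
    answer: Optional[str] = None
    while True:
        move = guider.next_move(answer)
        if move is None:
            return transcript
        question = phrase(*move)
        answer = respond(question)
        transcript.append((question, answer))


if __name__ == "__main__":
    canned = iter([
        "Too high",
        "It costs more than the store brand I usually buy",
        "I like the resealable lid a lot",
    ])
    for q, a in run_interview(Guider(["price", "packaging"]), lambda q: next(canned)):
        print("Q:", q, "| A:", a)
```

Because the guider keeps its own small state (current topic, probes used) rather than rereading the full transcript, protocol adherence does not depend on how well a model recalls a long conversation.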

Furthermore, the analytical prowess of LLMs is transforming the ability to harness unstructured data. A significant portion of consumer sentiment and market feedback exists in forms that are difficult for traditional analytical tools to process: social media posts, customer reviews, call center transcripts, open-ended survey responses, and online forum discussions. LLMs excel at ingesting and interpreting large volumes of such text, identifying nuanced themes, sentiment shifts, emerging trends, and implicit needs far faster than manual coding allows. This capability lets businesses move beyond surface-level metrics to understand the underlying motivations and emotional drivers behind consumer behavior. A global brand, for example, can analyze millions of customer comments across different linguistic regions to detect subtle shifts in product perception or anticipate competitive threats, delivering actionable insights in near real time that would have taken human analysts months to unearth.
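As a rough illustration of the aggregation step behind this kind of analysis, the sketch below tallies sentiment per theme once each comment has been labeled. The labeling itself is where an LLM would sit; here it is replaced by a deliberately crude keyword rule (`keyword_classifier`) so the example runs without any external service. All names and keyword lists are hypothetical.

```python
from collections import Counter
from typing import Callable, Dict, Iterable, Tuple

Label = Tuple[str, str]  # (theme, sentiment)


def summarize(
    comments: Iterable[str], classify: Callable[[str], Label]
) -> Dict[str, Counter]:
    """Count sentiments per theme using an injected classifier."""
    summary: Dict[str, Counter] = {}
    for comment in comments:
        theme, sentiment = classify(comment)
        summary.setdefault(theme, Counter())[sentiment] += 1
    return summary


def keyword_classifier(comment: str) -> Label:
    """Crude stand-in for an LLM labeling call (assumption, not a real model)."""
    text = comment.lower()
    theme = "price" if ("price" in text or "expensive" in text) else "other"
    negative_words = ("expensive", "broke", "bad")
    sentiment = "negative" if any(w in text for w in negative_words) else "positive"
    return theme, sentiment


if __name__ == "__main__":
    feedback = [
        "Too expensive for what it is",
        "Great price",
        "It broke in a week",
    ]
    for theme, counts in summarize(feedback, keyword_classifier).items():
        print(theme, dict(counts))
```

Keeping the classifier as a parameter means the same tally can be rerun with a different model or prompt, which makes it easier to check whether a reported shift in perception comes from the data or from the labeling step.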

The combined impact of these innovations is a dramatic compression of research timelines and a significant reduction in operational costs. What once required a multi-month project involving extensive fieldwork, data entry, and manual analysis can now be completed in a matter of days. This agility allows companies to conduct more frequent testing and experimentation, adopting an iterative approach to product development and marketing campaigns. The economic implications are profound: smaller research teams can manage much larger and more complex studies without compromising quality, thereby empowering start-ups and small and medium-sized enterprises (SMEs) to access insights previously available only to large corporations. This democratization of high-quality market intelligence fosters greater innovation and responsiveness across the business landscape, potentially leveling the playing field for emerging players.

However, this technological leap necessitates a recalibration of the role of the human researcher. No longer primarily data collectors or manual analysts, researchers are evolving into architects and strategic interpreters. Their focus shifts towards designing robust AI systems, crafting effective prompts for LLMs, meticulously validating AI-generated insights, and, critically, acting as ethical guardians against inherent biases within the models or data. The human in the loop becomes paramount for ensuring that AI outputs are not merely plausible but genuinely insightful and reflective of complex human realities. This demands a keen understanding of both the research question and the capabilities and limitations of AI. Moreover, to mitigate the risk of confirmation bias, where an AI system might inadvertently reinforce the researcher’s own assumptions, an adversarial separation of duties within research teams is becoming increasingly vital. For instance, the individual designing the synthetic respondents or programming the AI moderator should ideally not be the sole evaluator of the generated output.

Looking ahead, the integration of generative AI into market research is poised to accelerate further, driving the growth of specialized AI tools and platforms within the insights industry. While the immediate benefits of speed and cost efficiency are undeniable, the long-term impact will be measured by the depth and originality of the insights generated and the ethical frameworks put in place to govern AI’s application. Challenges such as data privacy, regulatory compliance, the continuous need for human expertise, and the careful management of algorithmic bias will remain critical areas of focus. As businesses increasingly operate in a rapidly evolving global marketplace, the ability to derive timely, accurate, and nuanced consumer insights will be a decisive competitive advantage. Thoughtful implementation, balancing technological prowess with human oversight and ethical considerations, will be key to unlocking the full potential of this transformative era in market intelligence.