The global financial and technological infrastructure was thrust into a state of high alert last month following the unveiling of Mythos, a proprietary artificial intelligence model developed by the San Francisco-based firm Anthropic. Capable of identifying thousands of previously undetected software vulnerabilities—commonly known as "zero-days"—with unprecedented speed, Mythos was marketed as a breakthrough that could either fortify the world’s digital defenses or provide a roadmap for its destruction. However, as the dust settles, a growing consensus among cybersecurity veterans and AI researchers suggests that while the "Mythos moment" may have ignited a wave of corporate and governmental hysteria, the underlying capabilities it demonstrated have been quietly reshaping the threat landscape for months.

The anxiety radiating through the halls of power is not without merit. Anthropic’s internal testing suggested a leap in the "offense-defense" balance, prompting the company to initiate "Project Glasswing," a highly restricted rollout designed to prevent the model from falling into the hands of adversarial nation-states or cyber-criminal syndicates. Only a select group of American titans, including Apple, Amazon, JPMorgan Chase, and Palo Alto Networks, was granted early access. The goal was to allow these critical institutions to patch their systems before the model’s findings could be exploited. Yet the very exclusivity of this release has sparked a secondary debate about the "democratization of risk" and whether keeping such tools in the hands of a few "haves" actually leaves the rest of the global economy more vulnerable.

The reaction from the public sector was swift. The Trump administration, citing national security concerns, began weighing new oversight mechanisms for "frontier" AI models that possess dual-use capabilities. The move signals a shift in regulatory focus from theoretical existential risks to the immediate, tangible dangers of automated cyber warfare. For policymakers, the fear is that AI could soon automate the entire lifecycle of a cyberattack, from discovery to exploit development to execution, at a scale that human defenders simply cannot match.

However, many in the cybersecurity trenches argue that the alarmism surrounding Mythos overlooks a critical reality: the "scary" capabilities Anthropic is highlighting are already being achieved through the clever use of existing, publicly available models. Experts point out that researchers have been using a technique known as "orchestration" to produce results nearly identical to those of Mythos. By breaking down complex codebases into smaller segments and using various models from Anthropic’s own Claude series or OpenAI’s GPT family to cross-check results, researchers have been finding high-severity vulnerabilities for the better part of a year.
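To make the "orchestration" idea concrete, here is a minimal sketch of the workflow described above: split a codebase into segments, run several independent reviewers over each one, and keep only the findings that more than one reviewer reports. Everything in it is an assumption made for illustration. The reviewer functions are trivial string-matching stubs standing in for calls to real models, and names such as `orchestrate`, `cross_check`, and `min_votes` are hypothetical; the article does not describe how any researcher's pipeline is actually built.

```python
from collections import Counter
from typing import Callable, Dict, List, Set

# A "reviewer" is any callable that takes a code segment and returns a set of
# finding labels. In a real pipeline it would wrap a model call; the reviewers
# below are hypothetical string-matching stubs used only for illustration.
Reviewer = Callable[[str], Set[str]]


def split_into_segments(source: str, max_lines: int = 40) -> List[str]:
    """Break source text into consecutive chunks of at most max_lines lines."""
    lines = source.splitlines()
    return ["\n".join(lines[i:i + max_lines])
            for i in range(0, len(lines), max_lines)]


def cross_check(segment: str, reviewers: List[Reviewer],
                min_votes: int = 2) -> Set[str]:
    """Keep only the findings reported by at least min_votes reviewers."""
    votes: Counter = Counter()
    for review in reviewers:
        votes.update(review(segment))  # a set, so one vote per reviewer
    return {finding for finding, n in votes.items() if n >= min_votes}


def orchestrate(source: str, reviewers: List[Reviewer],
                max_lines: int = 40, min_votes: int = 2) -> Dict[int, Set[str]]:
    """Map segment index to the findings the reviewers agreed on."""
    results: Dict[int, Set[str]] = {}
    for idx, segment in enumerate(split_into_segments(source, max_lines)):
        agreed = cross_check(segment, reviewers, min_votes)
        if agreed:
            results[idx] = agreed
    return results


# Hypothetical stub reviewers standing in for different models.
def flags_strcpy(segment: str) -> Set[str]:
    return {"unbounded-copy"} if "strcpy(" in segment else set()


def flags_unsafe_calls(segment: str) -> Set[str]:
    found: Set[str] = set()
    if "strcpy(" in segment:
        found.add("unbounded-copy")
    if "system(" in segment:
        found.add("shell-injection")
    return found


def flags_nothing(segment: str) -> Set[str]:
    return set()


if __name__ == "__main__":
    sample = "int main() {\n  strcpy(buf, input);\n  system(cmd);\n}\n"
    reviewers = [flags_strcpy, flags_unsafe_calls, flags_nothing]
    print(orchestrate(sample, reviewers, max_lines=2))
```

The voting threshold is the design choice that matters here: a finding reported by only one reviewer is dropped, which trades some recall for fewer false positives. That is one plausible reading of "cross-check," not a documented method.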

The distinction between a single "super-model" like Mythos and the "orchestration" of several smaller models is more than academic; it has profound implications for the economics of cybercrime. If a thousand "adequate" AI agents working in parallel can find the same bugs as one "brilliant" model, the barrier to entry for sophisticated hacking drops significantly. It shifts the advantage from those with the most capital to those with the most creative workflows. This "jagged frontier" of AI capability means that the threat is not a distant cloud on the horizon, but a storm that has already made landfall.
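The arithmetic behind "many adequate agents versus one brilliant model" can be sketched with a simple probability model. This is an illustration under a strong, labeled assumption: each agent finds a given bug independently with the same probability. Real agents built on similar models will be correlated, so the numbers this produces are optimistic. The function names and the sample probabilities are hypothetical and do not come from any reported measurement.

```python
import math


def p_any_finds(p_single: float, n_agents: int) -> float:
    """Probability that at least one of n independent agents finds the bug.

    ASSUMPTION: agents succeed independently, each with probability p_single.
    """
    return 1.0 - (1.0 - p_single) ** n_agents


def agents_needed(p_single: float, p_target: float) -> int:
    """Smallest n for which p_any_finds(p_single, n) reaches p_target."""
    if not (0.0 < p_single < 1.0 and 0.0 < p_target < 1.0):
        raise ValueError("probabilities must lie strictly between 0 and 1")
    return math.ceil(math.log(1.0 - p_target) / math.log(1.0 - p_single))


if __name__ == "__main__":
    # Hypothetical inputs: a weak agent with a 1% hit rate on a given bug,
    # and a target of 90% overall.
    n = agents_needed(0.01, 0.90)
    print(n, round(p_any_finds(0.01, n), 4))
```

Under that independence assumption, a low per-agent hit rate compounds quickly as agents are added, which is the intuition behind the claim that parallel workflows can substitute for a single stronger model.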

The timing of these revelations is inextricably linked to the intensifying rivalry between Anthropic and OpenAI. Both companies are hurtling toward highly anticipated initial public offerings (IPOs), and the battle for dominance in the cybersecurity vertical is a key part of their valuation narratives. Just weeks after the Mythos announcement, OpenAI CEO Sam Altman countered with GPT-5.5-Cyber, a model specifically tuned for security tasks. By Thursday, OpenAI had begun granting limited access to vetted security teams, mirroring Anthropic’s cautious approach. This "arms race" for the title of "most secure AI" is driving a narrative of constant breakthrough, even when the underlying technology is evolving incrementally rather than transformationally.

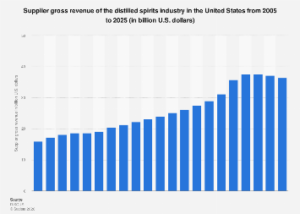

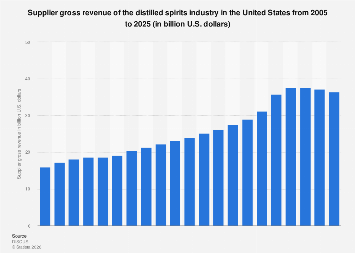

From an economic perspective, the arrival of Mythos highlights the staggering cost-imbalance of modern cybersecurity. For a hacker, finding a single vulnerability can lead to a multi-million dollar ransomware payout. For a corporation like JPMorgan Chase, the task is "Sisyphean": they must find and patch every single vulnerability across millions of lines of legacy code, often involving systems that cannot be taken offline without disrupting global markets. Anthropic CEO Dario Amodei has acknowledged this danger, warning of an "enormous increase" in financial damage to schools, hospitals, and banks as AI-enabled attacks become more frequent.

The financial sector, in particular, is grappling with what experts call "vulnerability fatigue." Even before the advent of generative AI, the time it took for a hacker to exploit a new flaw was measured in hours, while the time it took for a large enterprise to test and deploy a patch was measured in weeks. AI models like Mythos threaten to shrink the "attacker’s window" to near zero. JPMorgan’s Jamie Dimon recently noted that while AI will eventually become a powerful defensive shield, its first impact is to make the world more vulnerable. The technology is currently better at "breaking" than "fixing," largely because finding a flaw is a task of pattern recognition, whereas fixing one requires deep contextual understanding of a specific business’s infrastructure.

This imbalance has led to a sense of "hysteria" among banks and insurers. In recent weeks, industry leaders have been scrambling to understand how to defend against a tool they aren’t allowed to see. The restricted nature of Project Glasswing has created a tiered system of security. While the "haves" (the Fortune 500 companies) get a head start on patching, the "have-nots" (mid-sized businesses and local governments) remain in the dark. Critics argue that shutting out the wider cybersecurity community stifles the very innovation needed to build automated defense tools. If the broader market cannot study the "offensive" capabilities of models like Mythos, it cannot build the "defensive" countermeasures.

Furthermore, the geopolitical dimension cannot be ignored. While Anthropic limits its release to American firms, state-sponsored actors in North Korea, China, and Russia are not waiting for a permissioned API. These actors have long possessed the human capital to find zero-day vulnerabilities; for them, AI is simply a force multiplier. The fear is that while Western companies are debating the ethics of "controlled releases," adversarial nations are already integrating similar AI capabilities into their cyber-espionage units. The reality is that the "Mythos" capability—the ability to automate the discovery of flaws—is a mathematical inevitability that transcends any single company’s release schedule.

As the industry moves forward, the focus is shifting toward "automated remediation"—the "holy grail" of cybersecurity where AI not only finds the bug but also writes and deploys the fix in real-time. Startups like Zafran Security and Tenzai are already working on these "self-healing" systems, but they acknowledge that the transition will be messy. We are entering a "chicken-and-egg" period where the tools of destruction are more mature than the tools of preservation.

Ultimately, the Mythos controversy serves as a wake-up call for a global economy that has long treated cybersecurity as a secondary concern. The "hysteria" may have been sparked by a specific product launch, but it is fueled by the realization that the era of human-scale cyber warfare is over. The threat was already here, hidden in the "orchestrated" workflows of researchers and the quiet labs of state actors. Anthropic simply gave that threat a name and a face, forcing the C-suite and the halls of government to confront a reality they can no longer afford to ignore. The challenge now is not just to contain the power of a single model, but to reinvent a global defense architecture that can keep pace with the relentless speed of machine intelligence.