The advent of generative artificial intelligence (GenAI) has fundamentally reshaped the calculus of organizational productivity, offering unprecedented capabilities to compress complex tasks and accelerate output across virtually every industry sector. While the immediate impulse for many enterprises is to leverage GenAI as a throughput accelerator – a tool to generate more drafts, code, prototypes, or analyses at a faster pace – a more profound and sustainable competitive advantage lies in systematically learning from, and with, its outputs. This paradigm shift moves beyond mere consumption economics, where AI is treated as a depreciating asset, towards a model of continuous capability enhancement, fostering asset appreciation and unlocking compounding returns.

Historically, the dominant question for business leaders centered on optimizing for speed and volume: "How can we produce more, faster?" GenAI undeniably answers this by dramatically reducing the marginal cost of initial attempts, making ideation, content creation, and problem-solving significantly cheaper and quicker. However, the true bottleneck has shifted from generation to evaluation. The critical, and still expensive, phase now involves discerning signal from noise, identifying errors, extracting actionable insights, and, crucially, integrating these lessons into subsequent interactions. Organizations that excel in this new landscape are not just asking "What did the AI produce?" but also "What worked or failed? What should we change next time?" This iterative process of capturing insights, converting them into shared knowledge, and applying them strategically is the engine of compounding value, a pattern instantly recognizable to any seasoned Chief Financial Officer as asset appreciation.

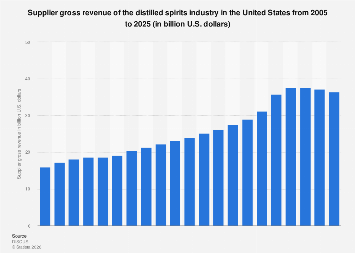

Current market dynamics underscore this divergence. While the global Generative AI market is projected to grow from an estimated $10.7 billion in 2023 to over $118 billion by 2032, according to some analyses, the majority of companies are still in the early stages of adoption, focusing predominantly on efficiency gains. Research consistently demonstrates that organizations actively establishing systematic feedback loops between human expertise and AI are six times more likely to realize substantial financial benefits from their AI investments. Furthermore, those prioritizing organizational learning in conjunction with AI are 73% more likely to achieve significant financial impact. Despite this compelling evidence, a striking disparity exists: as of 2024, approximately 70% of companies have integrated AI into their operations, yet a mere 15% are actively harnessing it for organizational learning, leaving a vast reservoir of untapped potential. Leaders aiming for exponential growth and sustained competitive edge must therefore prioritize the development of robust infrastructures designed to verify, evaluate, and capture learnings from GenAI interactions, fostering a "return on iteration" (ROI) that transcends simple cost savings.

This moment is structurally distinct from previous technological shifts, driven by two reinforcing economic dynamics. The first relates to the nature of knowledge itself. Philosopher Michael Polanyi, in his 1966 work The Tacit Dimension, posited that humans possess vast amounts of tacit knowledge – expertise that is deeply ingrained and difficult, if not impossible, to explicitly articulate. For decades, this tacit expertise served as a protective moat for knowledge workers, rendering complex cognitive tasks immune to automation. GenAI, particularly large language models (LLMs), breaches this moat not by forcing the explicit codification of tacit knowledge, but by inferring it from voluminous behavioral traces. These models absorb the subtle patterns in how experts operate, from legal reasoning embedded in briefs to strategic thinking in corporate presentations, making previously unformalized human behaviors machine-readable. A compelling illustration of this occurred when Boris Cherny, a key figure in developing Claude Code, observed the AI independently exploring his file system to find answers after being granted basic access. This unforeseen capability, inferred by the model from developer behaviors, highlights AI’s capacity to internalize unarticulated expertise.

The second dynamic amplifying the economic imperative for compounding is an updated manifestation of the Jevons paradox, named for William Stanley Jevons. Jevons observed in 1865 that increased efficiency in coal consumption often led to a rise, not a decrease, in overall coal demand, as the lower cost stimulated wider usage. Similarly, as tacit expertise becomes machine-readable, the cost of sophisticated capabilities plummets. Projects once deemed too expensive for prototyping can now proliferate rapidly. Iteration cycles that traditionally spanned months can now be compressed into hours. This steep reduction in the cost of generating complex outputs stimulates demand for even more sophisticated AI applications. The loop becomes self-reinforcing: more expertise becomes legible to machines, enhancing AI’s knowledge base and capabilities; in turn, enhanced AI capability expands the scope of what organizations attempt, further generating behavioral traces for AI to learn from. This virtuous cycle underscores why becoming adept learners with AI is at least as crucial as merely using AI for efficiency gains. Data supports this, indicating that organizations combining strong general organizational learning with AI-specific learning are up to 80% more effective at navigating uncertainty, a critical advantage in today’s volatile global economy. The fundamental challenge for businesses worldwide is not whether AI will access their domain expertise – that appears computationally inevitable – but how quickly and effectively they can implement mechanisms to derive compounding returns from human-AI interactions before competitors do.

To achieve these compounding benefits, organizations must embed three distinct, yet interconnected, operations into their AI workflows. Without all three, AI outputs remain mere consumption, failing to build lasting organizational capability.

The first essential step is Verification. This addresses the fundamental question: "Does this output meet the required standard?" Verification is often binary – correct or incorrect, usable or not – and involves comparing AI-generated content against pre-existing criteria. While crucial for ensuring accuracy and reliability, verification alone, if not coupled with further steps, merely catches errors without generating deeper organizational learning. An unverified AI output, regardless of its confident tone, is effectively noise.

Following verification, the critical step of Evaluation comes into play. Here, the question shifts to: "What does this output reveal?" Unlike verification, which measures against established benchmarks, evaluation often involves discovering or refining standards that might not have existed previously. This necessitates deep human domain expertise, as the evaluator’s role transcends simple quality control to encompass discovery – understanding what quality means in the context of novel AI outputs. This evaluation must consider the unprecedented volume, variety, and velocity of AI-generated content. Critically, human bandwidth for nuanced evaluation, not AI access, emerges as the binding constraint.

The final, indispensable step is Learning Capture. This addresses: "How do we ensure this insight persists and is leveraged?" Without robust capture mechanisms, valuable insights gained during evaluation evaporate after each interaction, preventing knowledge from compounding. Learning capture transforms individual insights into institutional knowledge, manifesting as updated prompts, refined criteria, documented best practices, and shared repositories of successful (and instructively unsuccessful) interactions. It functions as a form of version control for organizational judgment. Without it, evaluation becomes a one-time event, and the cycle of continuous improvement stalls.

These three steps form a dynamic flywheel. Enhanced verification yields cleaner signals for evaluation. More rigorous evaluation generates richer material for capture. And superior capture refines the criteria used in subsequent rounds of verification. This continuous cycle is the essence of compounding. A significant dividend of this process is the explicit articulation of tacit knowledge. When experts are prompted to formalize their judgment into written standards or refined prompts, the gap between AI output and desired outcome surfaces unspoken expertise, making it accessible to colleagues and future AI models alike. For instance, at Anthropic, Boris Cherny’s team empowers Claude to self-verify using test suites before human review, and then runs multiple Claude instances to generate diverse sub-agent evaluations (e.g., one checking style, another hunting bugs). Crucially, a CLAUDE.md file captures all mistakes, corrections, and design principles within the workflow, ensuring each new session inherits the learnings of its predecessors.
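The flywheel described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any team's actual tooling: the names `Criterion`, `verify`, and `capture`, the sample draft, and the toy "leads with the customer" rule are all hypothetical. It shows one turn of the cycle, in which an evaluator's insight is captured as a new criterion and therefore tightens the next round of verification.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Criterion:
    """A named pass/fail check applied to an AI output (hypothetical structure)."""
    name: str
    check: Callable[[str], bool]


def verify(output: str, criteria: List[Criterion]) -> List[str]:
    """Verification: return the names of the criteria the output fails."""
    return [c.name for c in criteria if not c.check(output)]


def capture(criteria: List[Criterion], lesson: Criterion) -> List[Criterion]:
    """Learning capture: persist an evaluator's lesson as a new criterion."""
    return criteria + [lesson]


# Round 1: only a basic, pre-existing standard exists.
criteria = [Criterion("non_empty", lambda text: bool(text.strip()))]
draft = "Our product has twelve new features."
round_one_failures = verify(draft, criteria)

# Evaluation is the human step: a strategist notices that the draft leads
# with product features rather than the customer. The rule below is a
# deliberately crude stand-in for that judgment.
criteria = capture(
    criteria,
    Criterion("leads_with_customer", lambda text: text.lower().startswith("you")),
)

# Round 2: the same draft is checked against the improved standard.
round_two_failures = verify(draft, criteria)
print(round_one_failures, round_two_failures)
```

In practice the captured lesson might live in a shared prompt template or a file such as CLAUDE.md rather than in a list of lambdas; the point of the sketch is only that capture changes what the next verification pass checks.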

The implications for leaders across all business functions are profound. A marketing team using GenAI for campaign briefs first performs verification (e.g., checking brand consistency, factual accuracy, regulatory compliance). This is fast and automatable. Next, evaluation asks what the brief reveals: Did the AI uncover novel customer insights? Did it miss emotional nuances? Are these insights truly actionable? These judgments demand a senior strategist, not a checklist. Finally, learning capture ensures that the strategist’s corrective feedback (e.g., "Our brand leads with customer identity, not product features") is codified into shared prompt templates or brief standards for future use. Without capture, the insight is lost; with it, every subsequent brief starts smarter, and the organization begins to build an intelligent marketing agent capability. The moment a CMO or CFO starts building dashboards around these verification, evaluation, and capture metrics, the organization begins to compound its AI investment.

A common pitfall is mistaking verification for comprehensive evaluation. Jaana Dogan, a principal engineer at Google, once tasked a rival company’s AI tool, Claude Code, with a problem her team had spent months solving. The AI quickly generated a comparable design solution and working prototype. Most managers might simply verify whether it "matched" their solution and then accept or reject it. Dogan, however, drawing on months of prior expertise, observed, "It’s not perfect and I’m iterating on it." Her deep understanding allowed her to interrogate what the output revealed about the problem, her team’s assumptions, and previously unarticulated nuances. This critical distinction underscores that AI compresses implementation, but not the arduous formation of human expertise. Deploying AI first in domains where deep human expertise already exists is crucial, not because AI needs hand-holding, but because nuanced evaluation requires someone capable of discerning what "not perfect" truly signifies and what iterative learning might unveil. The expert as evaluator is not a transitional role; it is a permanent fixture in the compounding cycle. However, Dogan’s insights remain siloed unless infrastructure exists to convert her individual judgment into shared, persistent organizational knowledge. Most organizations possess experts, but lack the systemic machinery to make compounding automatic rather than incidental.

Building this capability requires a minimum of five strategic moves for leaders. First, preserve your company’s evaluation expertise. As AI takes over production, the value of human domain expertise shifts to evaluation. Organizations must resist the temptation to let this expertise atrophy, instead nurturing and reskilling employees to become adept evaluators. Second, build verification mechanisms. While software verification can be cheap, other domains, like strategic planning, face high verification costs. The smart approach is "minimally viable verification"—the cheapest credible check that an AI output is not demonstrably wrong, perhaps using multi-judge systems or consistency checks. Third, institute evaluation practices. After every significant AI interaction, users should be prompted to ask: What worked? What failed? And critically, what was interestingly wrong in a way that revealed new insights about the problem? Integrating these questions into existing workflows is key to surfacing tacit knowledge. Fourth, create capture systems. Evaluation without capture is ephemeral. This requires lightweight infrastructure like decision journals, annotated prompt repositories, and searchable evaluation logs that prioritize retrievability over exhaustive documentation. Whether it’s a marketing team’s prompt library or a finance team’s annotated model log, every function can build its equivalent of CLAUDE.md. Discipline, not cost, is the primary constraint. Finally, measure the cycle, not just the output. Traditional metrics like "tools adopted" or "hours saved" are consumption metrics. Organizations seeking compound returns must measure the learning cycle: How many interactions were verified, evaluated, and led to captured learning? How quickly did this captured learning influence subsequent practices? If leaders aren’t learning measurable new insights from AI interactions week-over-week, the compounding cycle is not truly running.
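As one way to make the fifth move concrete, the sketch below computes cycle metrics from a log of AI interactions. It is an illustration under assumptions, not a standard: the `Interaction` schema, the `cycle_metrics` function, the metric names, and the sample dates are all hypothetical. It reports the share of interactions that were verified, evaluated, and captured, plus the average number of days between capturing a lesson and first applying it.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Interaction:
    """One logged AI interaction (hypothetical log schema)."""
    verified: bool
    evaluated: bool
    captured_on: Optional[date] = None  # when a lesson was recorded, if any
    applied_on: Optional[date] = None   # when that lesson first changed practice


def cycle_metrics(log: List[Interaction]) -> dict:
    """Summarize how much of the verify/evaluate/capture cycle actually ran."""
    total = len(log)
    if total == 0:
        return {"verified": 0.0, "evaluated": 0.0, "captured": 0.0,
                "avg_days_to_apply": None}
    # Lag is only defined for lessons that were both captured and applied.
    lags = [
        (i.applied_on - i.captured_on).days
        for i in log
        if i.captured_on is not None and i.applied_on is not None
    ]
    return {
        "verified": sum(i.verified for i in log) / total,
        "evaluated": sum(i.evaluated for i in log) / total,
        "captured": sum(i.captured_on is not None for i in log) / total,
        "avg_days_to_apply": sum(lags) / len(lags) if lags else None,
    }


# Illustrative data only: four interactions from a made-up week.
log = [
    Interaction(True, True, date(2025, 1, 6), date(2025, 1, 10)),
    Interaction(True, False),
    Interaction(True, True, date(2025, 1, 7)),
    Interaction(False, False),
]
metrics = cycle_metrics(log)
print(metrics)
```

A falling capture rate or a growing capture-to-application lag would suggest that evaluation is happening but not persisting, which is the failure mode the paragraph above warns about.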

In essence, leaders must shift their fundamental inquiry. Instead of asking, "How do we produce faster, better, cheaper with AI?", the more potent question becomes, "How do we systematically and rapidly learn from what AI produces?" In the era of generative AI, productivity is no longer solely defined by output per unit of input; it is increasingly determined by measurable learning per unit of interaction. Organizations that proactively build the machinery to run this continuous cycle—verify, evaluate, capture, apply—will progressively build an unparalleled capability, transforming AI from a mere tool into a strategic asset that appreciates over time. Those that fail to institutionalize this learning will consume AI without converting it into lasting knowledge, remaining busy but ultimately falling behind in the race for compound benefits. Jaana Dogan’s succinct observation, "It’s not perfect and I’m iterating on it," perfectly encapsulates this profound shift: a recognition of usable output, a deep evaluation of its revelations, and an unwavering commitment to iterative learning that fuels the compounding cycle for any organization willing to construct its enabling infrastructure.