The rapid proliferation of artificial intelligence across industries has brought into sharp focus not just its transformative potential but also the profound ethical challenges it poses. As AI systems increasingly permeate daily life, from consumer electronics to critical infrastructure, the imperative for responsible development has escalated from a theoretical discussion to a practical governance priority for multinational corporations. At the forefront of this evolution is Sony, which initiated its AI ethics journey as early as 2018, recognizing the need to embed responsible practices into its diverse portfolio spanning creative entertainment and advanced technology. Alice Xiang, Sony’s global head of AI governance and lead research scientist for AI ethics at Sony AI, spearheads the strategic shift from merely outlining ethical principles to operationalizing robust governance frameworks that ensure responsible AI integration across all business units.

The core challenge in deploying ethical AI lies in the data that fuels these intelligent systems. Historically, the deep learning revolution was propelled by massive datasets, often scraped from the web without explicit consent or fair compensation for the individuals whose information was collected. This practice, while enabling rapid technological advancement, created a foundational vulnerability: inherent biases within these datasets. These biases, if left unchecked, manifest as discriminatory or inaccurate performance in AI models, particularly for underrepresented demographic groups. The consequences range from minor inconveniences, such as a facial recognition system failing to unlock a phone for certain skin tones, to severe injustices, including erroneous financial assessments, wrongful arrests due to flawed surveillance systems, or biased medical diagnoses. Such failures erode public trust, incur significant reputational damage, and expose companies to substantial legal and regulatory risks, with analysts estimating that biased AI systems could cost industries billions annually in lost productivity and litigation.

Recognizing this critical gap, Alice Xiang’s team embarked on an ambitious project to develop a solution that would empower practitioners to evaluate and mitigate bias effectively. The culmination of this effort is the Fair Human-centric Image Benchmark (FHIBE), a publicly available, ethically sourced dataset designed specifically for assessing bias in computer vision models. FHIBE represents a significant departure from previous industry norms, directly addressing the scarcity of high-quality, responsible data for fairness evaluation. The initiative underscores a fundamental realization: measuring fairness, while conceptually complex, is an indispensable first step before any meaningful mitigation strategies can be applied. Without a robust and unbiased yardstick, efforts to "fix" AI systems remain largely speculative.

FHIBE’s methodology is anchored in two foundational pillars: rigorous ethical sourcing and comprehensive diversity. Ethical sourcing involves securing appropriate consent and providing fair compensation to all participants whose data is included in the benchmark. This goes beyond mere compliance, establishing a new standard for data collection that respects individual rights and autonomy. Participants grant explicit permission for their data to be used and retain control over its application, a stark contrast to the often opaque practices of web scraping. Complementing this, FHIBE prioritizes unparalleled diversity. Unlike many existing datasets that inadvertently skew towards specific demographics, often with light skin tones or limited geographical representation, FHIBE incorporates self-reported demographic information from a globally diverse participant base. This crucial detail minimizes reliance on third-party annotators guessing sensitive attributes like ancestry or gender, thereby enhancing accuracy and reducing the potential for secondary biases. Furthermore, the dataset includes extensive annotations detailing environmental factors, physical attributes, and camera specifications, allowing developers to conduct granular analyses and diagnose the underlying causes of performance disparities, such as issues related to contrast or lighting conditions that might disproportionately affect certain skin tones.
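The kind of granular analysis described above can be illustrated with a minimal Python sketch. It assumes each evaluated image carries a self-reported attribute, an environmental annotation, a ground-truth label, and a model prediction. The field names (`skin_tone`, `lighting`, `label`, `prediction`), the helper functions, and the toy records are hypothetical and do not reflect FHIBE's actual schema or tooling.

```python
from collections import defaultdict


def accuracy_by_group(records, group_key):
    """Return {group value: accuracy} for records grouped by one annotation."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for rec in records:
        group = rec[group_key]
        total[group] += 1
        if rec["prediction"] == rec["label"]:
            correct[group] += 1
    return {g: correct[g] / total[g] for g in total}


def largest_gap(per_group):
    """Difference between the best- and worst-performing groups."""
    return max(per_group.values()) - min(per_group.values())


# Toy records; field names and values are illustrative assumptions only.
records = [
    {"skin_tone": "A", "lighting": "bright", "label": 1, "prediction": 1},
    {"skin_tone": "A", "lighting": "dim", "label": 1, "prediction": 1},
    {"skin_tone": "B", "lighting": "bright", "label": 1, "prediction": 1},
    {"skin_tone": "B", "lighting": "dim", "label": 1, "prediction": 0},
]

# Slice the same results by a demographic attribute and by an
# environmental annotation to see where errors concentrate.
by_tone = accuracy_by_group(records, "skin_tone")
by_light = accuracy_by_group(records, "lighting")
print(by_tone, largest_gap(by_tone))
print(by_light, largest_gap(by_light))
```

Slicing by both kinds of annotation is what lets a developer ask whether a disparity between groups coincides with a condition such as dim lighting, rather than stopping at a single aggregate accuracy figure.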

The release of FHIBE challenges what Alice Xiang describes as "data nihilism": a pervasive sentiment that the widespread collection and use of personal data by powerful AI models inevitably leads to a forfeiture of individual data rights and control. This fatalistic view assumes that advanced technology can exist only at the expense of privacy and ethical considerations. FHIBE serves as a tangible counter-argument, demonstrating that it is possible to develop cutting-edge AI technologies while upholding stringent ethical standards for data sourcing. Although ethical data collection is inherently more difficult and expensive, the project shows that such endeavors are feasible and necessary. By providing a proof of concept, Sony aims to inspire the broader AI community to invest in scalable solutions that prioritize data rights and responsible development practices, moving beyond the "cat’s out of the bag" mentality.

The impact of FHIBE extends beyond mere evaluation, empowering AI developers with a suite of mitigation strategies. Once biases are accurately identified using the benchmark, teams can explore various avenues for improvement. Technically, this might involve refining a model’s loss function to optimize for more balanced performance across different groups during training. Non-technically, insights from FHIBE can inform critical deployment decisions, such as restricting the use of a model in environments where it is known to perform poorly (e.g., specific lighting conditions) or implementing device-level mitigations, like integrating a flashlight to enhance visibility before a task is executed. This holistic approach, considering the model within its real-world operational context, is vital for truly ethical AI deployment. Internally, Sony has already integrated FHIBE into its computer vision development processes across various business units, enabling proactive bias assessment and the formulation of targeted mitigation strategies before products reach consumers.
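One simple way to picture the loss-function idea is group-balanced averaging: compute the mean loss within each group first, then average across groups so a small group counts as much as a large one. The Python sketch below is an illustration under stated assumptions, not Sony's method; the function names, the group labels, and the toy probabilities are all hypothetical.

```python
import math
from collections import defaultdict


def binary_cross_entropy(p, y, eps=1e-12):
    """Per-example binary cross-entropy for predicted probability p and label y."""
    p = min(max(p, eps), 1 - eps)  # clamp to avoid log(0)
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))


def group_balanced_loss(probs, labels, groups):
    """Average the per-group mean losses so every group has equal weight."""
    per_group = defaultdict(list)
    for p, y, g in zip(probs, labels, groups):
        per_group[g].append(binary_cross_entropy(p, y))
    group_means = {g: sum(v) / len(v) for g, v in per_group.items()}
    balanced = sum(group_means.values()) / len(group_means)
    return balanced, group_means


# Toy batch: group "B" is under-represented and predicted less confidently.
probs = [0.9, 0.9, 0.9, 0.6]
labels = [1, 1, 1, 1]
groups = ["A", "A", "A", "B"]

losses = [binary_cross_entropy(p, y) for p, y in zip(probs, labels)]
plain_mean = sum(losses) / len(losses)
balanced, group_means = group_balanced_loss(probs, labels, groups)
print(plain_mean, balanced, group_means)
```

In this toy batch the balanced loss is larger than the plain mean, because the weaker performance on the smaller group is no longer diluted by the majority group. A training loop that minimized the balanced quantity would therefore be pushed harder to improve on that group.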

While FHIBE primarily addresses human-centric computer vision, a particularly sensitive domain because it involves biometric and personally identifiable information, its underlying principles are broadly applicable to other AI modalities. Voice and sound recognition, for instance, face similar challenges regarding consent for recordings, intellectual property rights, and the need for diverse linguistic and accent representation. The absence of comprehensive, ethically sourced datasets can lead to AI systems that consistently misinterpret or fail to recognize certain accents, creating barriers to access and reinforcing existing inequalities. FHIBE, by establishing a robust framework for ethical data collection and diversity, offers a template that can be adapted and expanded for these other modalities, even if the specific implementation details require further research and development. The core concepts of consent, compensation, privacy, IP protection, diversity, and fairness remain universal.

Alice Xiang’s career trajectory, moving from economic policy and early experiences with biased machine learning models to leading AI ethics at Sony, underscores the evolving understanding of this field. Her journey highlights the "catch-22" often faced by practitioners: the need to collect seemingly sensitive demographic data to accurately assess and address algorithmic bias, which can clash with privacy concerns. Her work at the Partnership on AI revealed that despite academic emphasis on complex metrics, the most significant barrier for companies was the practical inability to obtain ethically sourced demographic data for fairness assessments. FHIBE directly confronts this problem, providing a concrete, actionable solution rather than just advocating for change.

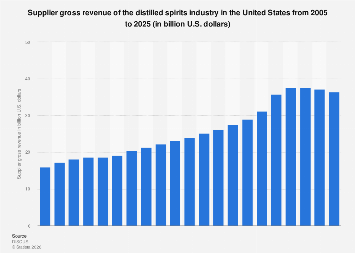

The broader industry faces a critical juncture. While a growing number of companies express a desire to develop ethical AI, the practical implementation remains slow. The temptation to rely on readily available, albeit problematic, datasets often outweighs the investment required for ethical sourcing, especially in the absence of stringent regulatory mandates. FHIBE aims to be an industry benchmark, not just for fairness evaluation but for elevating data collection standards across the board. Its widespread adoption, already evidenced by downloads from over 60 diverse institutions globally within weeks of its release, signals a growing recognition of this need. By making it easier for developers to "do the right thing," Sony hopes to foster an environment where responsible AI development becomes an ingrained industry standard, leading to more trustworthy, equitable, and ultimately more successful AI applications worldwide. This ongoing effort is a testament to the fact that progress in responsible AI is not about finding a single magical solution, but rather about a continuous commitment to incremental improvements and systemic changes, one ethically sourced dataset at a time.