Despite the breathtaking pace of advances in artificial intelligence, particularly the proliferation of generative AI models, a wide gap persists between technological hype and tangible macroeconomic impact. Leading economists are increasingly questioning whether this technology is actually translating into the widespread productivity gains so many have anticipated. Their skepticism stems from a closer analysis of AI’s current trajectory, which suggests that human choices, rather than any inherent technological imperative, are steering it toward an outcome that may favor automation and centralization over human augmentation and shared prosperity.

The prevailing narrative often portrays AI as an unstoppable force destined to unleash unprecedented wealth and efficiency. However, Nobel laureate Daron Acemoglu, an Institute Professor at MIT, argues compellingly that technology’s future is not preordained. Drawing on his extensive work, including the book *Power and Progress*, Acemoglu contends that society has significant agency in shaping how AI evolves. The critical choice is between two fundamentally different paths: one that prioritizes replacing human labor through automation and centralizing information, and another that focuses on complementing human skills, creating new tasks, and fostering a more decentralized, collaborative technological landscape.

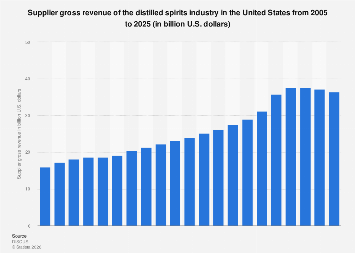

Current trends indicate a strong pull towards the automation agenda. Developers and corporations, driven by existing economic incentives, frequently aim for Artificial General Intelligence (AGI): systems capable of performing a wide array of cognitive tasks at or beyond human level. While automation can eliminate routine, tedious, and even dangerous tasks, its primary economic effect is often to substitute capital for labor. This typically benefits capital owners and shareholders through lower operating costs and higher output per employee, but it can also bring stagnant wages, job displacement, and growing inequality for a significant portion of the workforce. Industries from manufacturing to customer service, for instance, have seen AI-driven robotics and sophisticated algorithms take over roles once performed by people, raising concerns about the future of employment in traditional sectors. OECD data have consistently highlighted a slowdown in aggregate productivity growth across many developed economies since the mid-2000s, even as digital technologies, including early forms of AI, became more pervasive. The pattern is sometimes likened to the "Solow Paradox" of computing’s early days, when Robert Solow observed that the computer age could be seen everywhere but in the productivity statistics.

By contrast, an alternative vision for AI centers on human complementarity and the creation of entirely new tasks. This approach treats AI not as a replacement but as a powerful tool that augments human capabilities, enabling people to perform more complex, sophisticated, or novel work. Imagine an electrician equipped with an AI tool that can instantly search vast databases of equipment schematics, diagnostic histories, and best practices for rare or unfamiliar malfunctions. Or consider a nurse using AI to sift through patient data and medical literature, identifying subtle patterns that assist in early disease detection or personalized treatment plans without dictating the final decision. These applications leverage AI’s strengths in data processing and pattern recognition to enhance human judgment, creativity, and problem-solving rather than supplant them. Such "new tasks" have historically been powerful drivers of both productivity and wage growth, creating entirely new occupations and raising the skill ceiling for existing ones.

The current developmental trajectory of AI is also characterized by a significant move towards information centralization. Large Language Models (LLMs), for example, are designed to aggregate and process vast quantities of human knowledge, providing centralized answers. While powerful, this approach contrasts sharply with early aspirations for computing, which envisioned decentralized access to information and tools empowering individuals. The dominance of a few major technology corporations in AI development, with their massive computing resources and proprietary data sets, reinforces this centralization. This creates potential risks related to data monopolies, algorithmic bias, and reduced individual agency, as decision-making processes become increasingly opaque and controlled by powerful entities.

The primary driver behind this automation and centralization bias is a set of entrenched economic incentives. Leading AI companies often operate on business models that prioritize efficiency gains through automation and scale, aiming to capture significant market share by offering solutions that reduce labor costs. For startups in the AI ecosystem, the ultimate goal is frequently acquisition by these larger tech giants, which aligns their innovation efforts with the established priorities of the dominant players. This venture-capital-driven model, coupled with the "winner-take-all" dynamics of the digital economy, tends to funnel investment towards general-purpose, scalable automation technologies rather than bespoke, human-centric augmentation tools that might offer a slower, more distributed return on investment. Without a deliberate shift in these incentives, the path of least resistance will continue to be one of capital-intensive automation.

A critical limitation of current AI models, particularly in applications where human lives or well-being are at stake, is their reliability. While impressive in many domains, generative AI tools are prone to "hallucinations": producing factually incorrect or nonsensical information with high confidence. In fields like medicine, where even a one-in-a-thousand error rate could be catastrophic, this unreliability makes many automation applications, and even direct augmentation applications, untenable without significant human oversight. Developing truly robust, domain-specific AI that consistently provides accurate and trustworthy information to professionals such as nurses or electricians requires not only specialized training on high-quality, curated data but possibly also architectural approaches different from those of current general-purpose LLMs. Furthermore, the absence of clear property rights over data, and of well-functioning markets for it, impedes the creation and sharing of the granular, context-rich datasets that highly reliable, specialized AI tools need.
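A back-of-the-envelope calculation shows why even a one-in-a-thousand error rate matters once a tool is used routinely. Assume, purely for illustration, that each answer has an independent probability $p = 0.001$ of being wrong (real-world errors are unlikely to be independent, so this is a simplification), and that a professional consults the tool $n = 1000$ times. The probability of encountering at least one wrong answer is then:

$$
P(\text{at least one error}) = 1 - (1 - p)^{n} = 1 - (0.999)^{1000} \approx 1 - e^{-1} \approx 0.63
$$

Under these assumptions, a clinician would be more likely than not to receive at least one erroneous answer over a thousand queries, which is why human oversight remains necessary.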

The "productivity paradox" of the digital age continues to confound economists. Despite an explosion of patents, new applications, and faster technological cycles, aggregate productivity growth in many advanced economies remains stubbornly low, often below the rates of the "boring" pre-digital decades of the mid-20th century. Some argue this is purely a measurement problem, reflecting the inability of traditional GDP metrics to capture the quality improvements and intangible benefits of digital services. Acemoglu suggests the problem runs deeper. Earlier breakthroughs, such as antibiotics and electrification, had clear, measurable effects on life expectancy and industrial output; AI’s broad economic dividends are less evident. An over-reliance on automation that merely replaces existing tasks, rather than creating new ones or substantially enhancing human output, may be one contributing factor to this muted impact.

To redirect AI towards a more socially beneficial and economically productive future, a multifaceted approach involving proactive regulation and a shift in individual and corporate priorities is essential. Regulation, rather than merely being reactive to emerging risks, should actively shape the innovation landscape. This could involve policies such as research and development tax credits for AI projects focused on human-complementary technologies, antitrust measures to curb market concentration among tech giants, and robust data governance frameworks that incentivize the creation of high-quality, domain-specific datasets while protecting privacy. Such proactive policies could level the playing field, making human-centric AI development economically viable and attractive.

Ultimately, the future of AI rests on collective choices. Engineers and scientists within leading corporations possess the expertise to steer research towards pro-worker and pro-human technologies. Entrepreneurs, rather than solely aiming for acquisition by large incumbents, could be incentivized to build independent ventures focused on decentralized, empowering AI solutions. As individuals, understanding the fundamental trade-offs and advocating for policies that promote broad-based benefits can create the critical mass necessary for change. The promise of AI is immense, but its realization as a force for genuine progress and widespread prosperity hinges on a conscious decision to design and deploy it not to replace humanity, but to empower it, fostering an era of augmented intelligence and shared economic dynamism.