The corporate landscape is littered with cautionary tales where the pursuit of narrowly defined metrics has led to unintended consequences, eroding trust, damaging brand reputation, and undermining long-term strategic objectives. One prominent example, the Wells Fargo scandal of 2016, starkly illustrated this phenomenon: employees, under immense pressure to meet aggressive sales quotas, resorted to creating millions of fraudulent customer accounts. This was not merely a lapse in ethics but a profound failure in performance measurement, where a metric—the number of financial products sold per customer—became detached from its original purpose of genuine customer value creation. This systemic issue, often encapsulated by Goodhart’s Law ("When a measure becomes a target, it ceases to be a good measure"), plagues organizations globally, causing leaders to inadvertently foster gaming behaviors, ethical compromises, and a myopic focus that ultimately hinders sustainable business performance.

Despite decades of academic discourse and practical warnings against metric fixation, the challenge persists. Traditional remedies, such as the implementation of balanced scorecards or multi-faceted Key Performance Indicators (KPIs), frequently fall short because they remain susceptible to the same vulnerabilities: narrow optimization and gaming in the absence of stringent, dynamic oversight. The financial and reputational costs of these flawed systems are substantial. Gartner has estimated that poor data quality, a problem that misaligned KPIs can exacerbate, costs organizations an average of $15 million annually. Beyond direct financial losses, the erosion of employee morale, customer loyalty, and market trust can inflict long-term damage, making the need for a more sophisticated approach to performance measurement clearer than ever.

A paradigm shift is emerging from an unexpected quarter: the realm of artificial intelligence and machine learning research. Leaders are increasingly conceptualizing organizations as complex systems designed for optimization towards specific outcomes, a perspective that mirrors how machine learning engineers train algorithms. In both contexts, the challenge lies in optimizing proxy measures that can diverge from the true, underlying goals. While organizations are composed of individuals with intricate motivations and agency—a crucial distinction from algorithms—the frameworks developed to ensure AI models remain robust and avoid "overfitting" offer profound insights for human-centric performance systems. These techniques are not mechanistic solutions but rather conceptual blueprints requiring thoughtful human adaptation.

One foundational principle from AI that translates directly to robust KPI design is the diversification of measurement inputs, akin to an ensemble learning approach. In machine learning, relying on a single feature or a single model often leaves predictions fragile, whereas an ensemble combines several imperfect signals whose errors partly cancel and so tends to generalize better. Similarly, a singular organizational KPI, like "sales volume," encourages a tunnel vision that ignores other critical dimensions such as customer lifetime value, product quality, or market penetration. Instead, organizations should construct a "balanced portfolio" of indicators. This involves combining leading and lagging indicators, quantitative and qualitative data, and internal and external perspectives. For instance, alongside new customer acquisitions, a company might track customer satisfaction scores, referral rates, average revenue per user, and product engagement metrics. This multi-dimensional approach makes it significantly harder for individuals or teams to game the system without simultaneously improving genuine performance across several critical axes, fostering a more holistic and sustainable drive towards strategic objectives.
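
To make the "balanced portfolio" idea concrete, here is a minimal sketch of one way such indicators could be combined into a single score. Everything in it is an illustrative assumption rather than a prescribed method: the `Indicator` class, the `portfolio_score` function, the floor and target values, the equal weights, and the 0-100 scale of the sample figures are all hypothetical. The sketch uses a weighted geometric mean, chosen here because a weak result on any one indicator pulls the whole score down.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Indicator:
    name: str
    value: float   # observed value for the period
    floor: float   # hypothetical value that maps to a score of 0.0
    target: float  # hypothetical value that maps to a score of 1.0
    weight: float  # hypothetical relative importance in the portfolio

    def __post_init__(self) -> None:
        if self.target == self.floor:
            raise ValueError("target and floor must differ")

    def score(self) -> float:
        """Scale the raw value to [0, 1]; exceeding the target earns no extra credit."""
        raw = (self.value - self.floor) / (self.target - self.floor)
        return max(0.0, min(1.0, raw))


def portfolio_score(indicators: list[Indicator]) -> float:
    """Weighted geometric mean of the normalized indicator scores."""
    total_weight = sum(i.weight for i in indicators)
    result = 1.0
    for i in indicators:
        result *= i.score() ** (i.weight / total_weight)
    return result


# Hypothetical indicator names, loosely following the examples in the text.
NAMES = ["new_customers", "satisfaction", "referral_rate", "revenue_per_user"]


def build(values: list[float]) -> list[Indicator]:
    """Build an equally weighted portfolio from values on a 0-100 scale."""
    return [Indicator(n, v, floor=0.0, target=100.0, weight=1.0)
            for n, v in zip(NAMES, values)]


# A team that is solid everywhere versus one that maxes out a single metric.
balanced = portfolio_score(build([70, 70, 70, 70]))
gamed = portfolio_score(build([100, 30, 30, 30]))
print(f"balanced={balanced:.3f} gamed={gamed:.3f}")
```

In this toy comparison the team that maximizes acquisitions while neglecting the other three indicators scores lower than the evenly performing team. A simple weighted average would narrow that gap, which is why the geometric mean is used in the sketch.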

A second crucial strategy derived from AI is the integration of regularization and penalty functions. In machine learning, regularization techniques are employed to prevent models from overfitting the training data, ensuring they generalize well to new, unseen data. This is achieved by adding a penalty term to the loss function for model complexity or extreme parameter values. Applied to organizational KPIs, this means introducing explicit "costs" or negative incentives for behaviors that, while seemingly optimizing a single metric, detract from broader organizational health or ethical standards. For example, if a sales team is incentivized by transaction volume, a regularization penalty could be applied based on the subsequent customer churn rate or the number of customer complaints. This mechanism discourages "short-termism" and ensures that optimization efforts are aligned with long-term value creation and responsible conduct. This also extends to embedding ethical guardrails directly into performance frameworks, making it costly to achieve targets through means that compromise stakeholder trust or regulatory compliance.
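
The sales example above can be sketched in a few lines. This is an illustration under stated assumptions, not a recommended compensation formula: the function name `penalized_sales_score` and both penalty weights are hypothetical and would need to be calibrated by each organization. In machine learning the penalty is added to a loss that is minimized; here the score is maximized, so the penalty is subtracted.

```python
def penalized_sales_score(
    transactions: int,
    churned_customers: int,
    complaints: int,
    churn_penalty: float = 3.0,      # hypothetical cost per churned customer
    complaint_penalty: float = 1.5,  # hypothetical cost per complaint
) -> float:
    """Raw transaction volume minus penalty terms for churn and complaints."""
    penalty = churn_penalty * churned_customers + complaint_penalty * complaints
    return transactions - penalty


# Hypothetical figures: Team A sells more but loses customers afterwards.
team_a = penalized_sales_score(transactions=120, churned_customers=30, complaints=10)
team_b = penalized_sales_score(transactions=90, churned_customers=5, complaints=2)
print(team_a, team_b)  # 15.0 72.0
```

With these made-up numbers, the higher-volume team ends up with the lower adjusted score because its sales were followed by heavy churn. The size of the penalty weights plays the same role as the regularization strength in a model: too small and the raw metric still dominates, too large and teams may stop selling altogether.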

The third strategy emphasizes adaptive and iterative performance frameworks, mirroring the continuous learning cycles of advanced AI systems. Static KPIs are inherently vulnerable to obsolescence and gaming. As market conditions, competitive landscapes, and customer expectations evolve, so too must the metrics used to gauge performance. Machine learning models in production are periodically retrained and refined with new data, allowing them to adapt to changing patterns. Similarly, organizations should implement dynamic KPI systems with regular review cycles, automated feedback loops, and mechanisms for recalibration. This could involve A/B testing different metric formulations to assess their effectiveness in driving desired behaviors without adverse side effects. Furthermore, predictive analytics can be leveraged to anticipate future performance trends and proactively adjust targets or introduce new indicators, ensuring that the measurement system remains relevant and forward-looking, rather than a backward-looking report card.
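
One simple form an automated feedback loop could take is a periodic check of whether a KPI still moves together with the outcome it is meant to track. The sketch below is an assumption-laden illustration: the function `needs_recalibration`, the six-period window, the 0.5 correlation threshold, and the sample series are all hypothetical, and a plain Pearson correlation is only one of many possible drift signals.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation; returns 0.0 if either series is constant."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def needs_recalibration(
    kpi: list[float],
    outcome: list[float],
    window: int = 6,               # hypothetical number of recent periods to inspect
    min_correlation: float = 0.5,  # hypothetical threshold for "still aligned"
) -> bool:
    """Flag a KPI whose recent values no longer track the true outcome."""
    if len(kpi) != len(outcome):
        raise ValueError("kpi and outcome must cover the same periods")
    if len(kpi) < window:
        return False  # not enough history to judge
    return pearson(kpi[-window:], outcome[-window:]) < min_correlation


# Hypothetical monthly data: sign-ups keep rising while retained revenue turns down.
signups = [10, 12, 14, 16, 18, 20, 22, 24, 26, 28, 30, 32]
retained_revenue = [5, 6, 7, 8, 9, 10, 10, 9, 8, 7, 6, 5]

early_flag = needs_recalibration(signups[:6], retained_revenue[:6])
late_flag = needs_recalibration(signups, retained_revenue)
print(early_flag, late_flag)  # False True
```

In the first six months the proxy and the outcome rise together, so no review is triggered. In the last six the proxy keeps climbing while the outcome falls, which is the pattern a review cycle is meant to catch.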

Finally, the fourth strategy from AI research calls for a focus on latent objectives and true value drivers, moving beyond easily observable proxy metrics. Machine learning often deals with inferring complex, unobservable variables (latent variables) from observable data. For instance, customer "engagement" might be a latent variable inferred from clicks, time spent on a page, repeat visits, and social shares, rather than simply measuring "number of clicks." In business, the true goal might be "sustainable innovation" or "deep customer loyalty," which are poorly captured by simple output metrics like "number of new products launched" or "customer retention rate." By employing advanced analytical techniques, including AI models, organizations can identify and measure these multi-factor latent variables that represent genuine value creation. This approach encourages a deeper understanding of causal relationships and systemic impacts, rather than merely optimizing for correlational proxies, thereby directing efforts towards activities that genuinely enhance strategic outcomes.
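
The engagement example can be illustrated with a deliberately crude stand-in for a latent-variable model: standardize each observable signal and average the results per customer. This is not a fitted factor model or principal component analysis, both of which would estimate the loadings from data. It is an equal-weight composite, and the function names and all sample figures are hypothetical.

```python
from statistics import mean, pstdev


def standardize(values: list[float]) -> list[float]:
    """Convert raw values to z-scores; a constant signal carries no information."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def latent_engagement(signals: dict[str, list[float]]) -> list[float]:
    """Equal-weight average of standardized signals, one score per customer."""
    columns = [standardize(col) for col in signals.values()]
    return [mean(row) for row in zip(*columns)]


# Hypothetical data for four customers (same order in every list).
signals = {
    "clicks": [50, 20, 15, 5],
    "minutes_on_page": [2, 12, 10, 1],
    "repeat_visits": [1, 6, 5, 0],
    "social_shares": [0, 3, 2, 0],
}

scores = latent_engagement(signals)
top_by_clicks = signals["clicks"].index(max(signals["clicks"]))
top_by_latent = scores.index(max(scores))
print(top_by_clicks, top_by_latent)  # 0 1
```

The first customer leads on clicks alone but ranks third on the composite, behind two customers who click less yet spend more time, return more often, and share more. That gap between the single proxy and the multi-signal estimate is the point the paragraph above makes.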

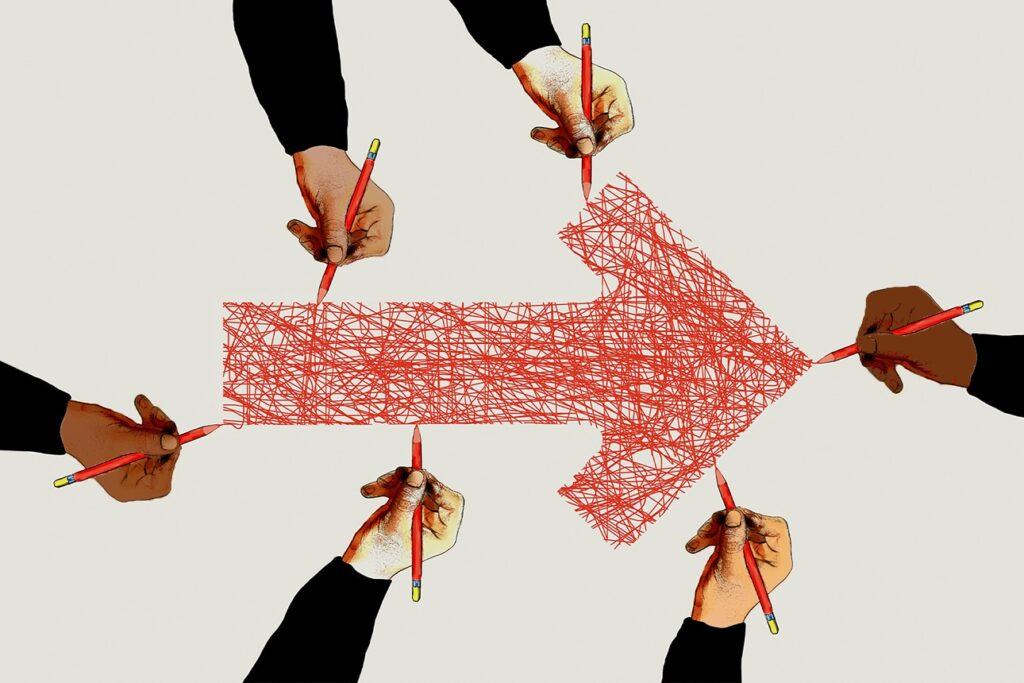

Implementing these AI-inspired frameworks necessitates more than just a conceptual shift; it requires significant organizational commitment. Leadership must champion a culture of intellectual honesty regarding data and performance, valuing genuine learning over superficial metric attainment. This involves investing in robust data infrastructure, advanced analytics platforms, and upskilling human capital in data science and organizational behavior. Cross-functional teams comprising data scientists, economists, behavioral psychologists, and business leaders will be crucial in designing, validating, and continuously refining these sophisticated performance systems. The global trend towards data governance and ethical AI further underscores the need for transparent and fair measurement systems that incorporate these principles.

Ultimately, by drawing lessons from the rigorous discipline of artificial intelligence, businesses can move beyond the pitfalls of Goodhart’s Law. This intelligent approach to KPI design promises not just to prevent gaming but to cultivate organizational resilience, foster ethical conduct, and drive sustained, genuine value creation in an increasingly complex and data-saturated world. It represents an evolution from merely measuring what is easy to measure, to strategically measuring what truly matters for long-term success and societal contribution.