The global landscape of artificial intelligence is undergoing a fundamental structural transition, moving beyond the linguistic capabilities of chatbots toward a sophisticated understanding of the physical world. At the vanguard of this shift, Alibaba Cloud has led a significant 2 billion yuan ($290 million) Series B investment in ShengShu, a Beijing-based startup specializing in "world models" and advanced video generation. This capital infusion, which includes participation from Baidu Ventures and TAL Education, marks a pivotal moment in the evolution of generative AI, signaling that the industry’s most influential players believe the limitations of large language models (LLMs) are becoming increasingly apparent.

For the past two years, the AI narrative has been dominated by the success of LLMs like OpenAI’s ChatGPT and Alibaba’s own Qwen. While these models have demonstrated an uncanny ability to process and generate human-like text, they remain essentially "disembodied" intelligences. They capture the statistical patterns of language but lack a fundamental grasp of physical causality: the way an object falls, the resistance of a surface, or the fluid dynamics of water. As the race for Artificial General Intelligence (AGI) intensifies, a growing number of researchers argue that truly intelligent systems must be grounded in the laws of physics.

ShengShu, a three-year-old firm that has rapidly ascended the ranks of China’s "AI Tigers," aims to bridge this gap. The company is the architect behind Vidu, a high-performance video generation tool that has consistently ranked among the top global models for text-to-video and image-to-video synthesis. Unlike earlier iterations of video AI that merely "stitched" images together, ShengShu’s underlying architecture is designed to function as a general world model. By training on multimodal data—encompassing vision, audio, and eventually tactile feedback—these models attempt to simulate the complexities of the three-dimensional world.

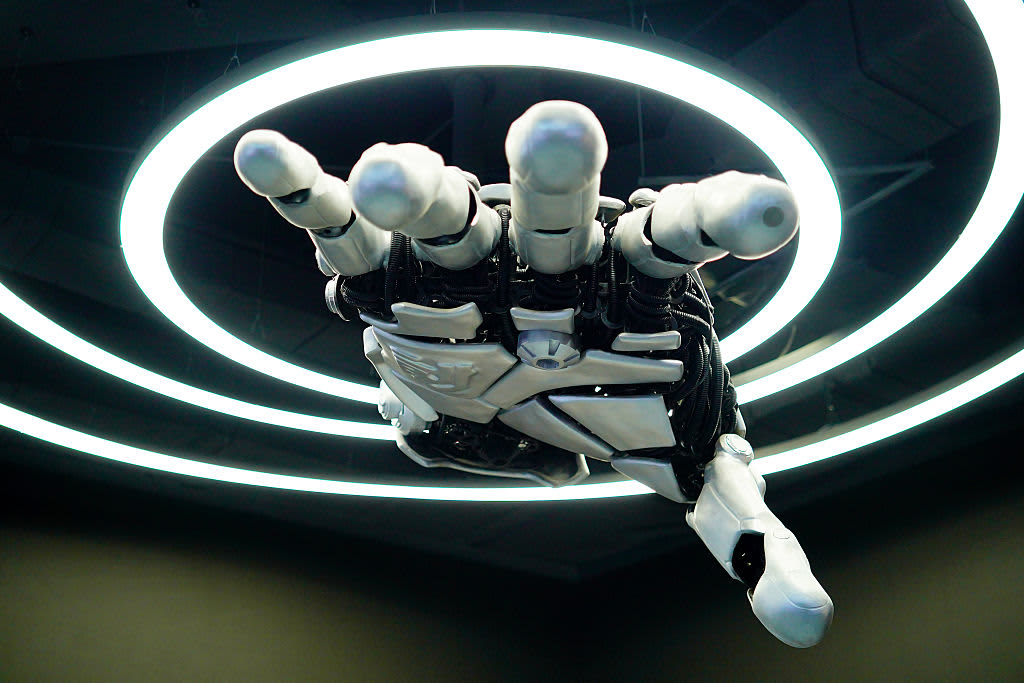

The strategic significance of this investment lies in where it points Alibaba’s portfolio. By leading this round, Alibaba is diversifying away from purely communicative tools and toward "embodied AI," a term for systems that interact with the physical environment, such as humanoid robots and autonomous vehicles. For a robot to navigate a warehouse or for a self-driving car to anticipate a pedestrian’s movement, predicting the next word in a sentence is not enough; the system needs a predictive model of physical reality. ShengShu’s founder, Zhu Jun, has articulated a vision in which AI systems "connect perception and action," allowing for consistent, predictable behavior in real-world scenarios.

This trend is not occurring in a vacuum. Chinese technology companies are competing fiercely, with one another and with international rivals, to define the next generation of visual synthesis. ByteDance, the parent company of TikTok, has released its own sophisticated video tools, while Kuaishou has gained significant traction with its Kling model. Globally, OpenAI’s Sora remains the benchmark, although its availability has been limited by delays and cost management. By backing startups like ShengShu, Alibaba is helping to keep the Chinese AI sector competitive, even as geopolitical tensions create barriers to accessing certain Western high-end hardware and software ecosystems.

Alibaba’s investment strategy over the past year reveals a calculated bet on the convergence of AI and physical space. In recent months, the e-commerce and cloud giant has led a $60 million round for PixVerse, a startup whose world model allows users to exert real-time "direction" over video generation, and a $50 million investment in Tripo AI, which specializes in the rapid conversion of 2D photographs into high-fidelity 3D digital models. Furthermore, Alibaba has open-sourced its own robotics-centric AI model, RynnBrain, illustrating a multi-pronged approach that combines proprietary investment with ecosystem-wide platform development.

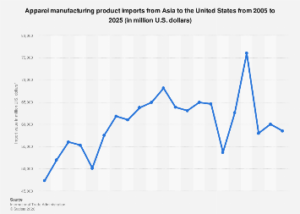

The economic implications of this shift are profound. As AI moves from the screen into the physical world, the total addressable market expands from digital services to the entirety of the industrial and manufacturing sectors. The integration of world models into "embodied" systems is expected to revolutionize industrial automation, healthcare robotics, and logistics. According to recent market analysis, the global market for embodied AI and smart robotics is projected to grow at a compound annual growth rate (CAGR) of over 20% through 2030, as companies seek to mitigate labor shortages and increase operational efficiency through high-level automation.

However, the transition from LLMs to world models is fraught with technical and computational challenges. Kevin Kelly, the co-founder of Wired and a prominent technology theorist, has noted that human-level intelligence requires a triad of capabilities: reasoning, physical understanding, and continuous learning. While LLMs have arguably addressed the reasoning and knowledge components, understanding of the physical world remains the "missing link." Training a world model requires vastly more computational power and more diverse datasets than training a text model. Text is relatively low-bandwidth data, whereas high-definition video is data-intensive and requires specialized "spatial-temporal" architectures to model the relationships between objects across time and space.
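To give a rough sense of the gap between text and video as raw data, the following back-of-envelope sketch compares one second of uncompressed 1080p video with a 1,000-word article. Every figure in it (resolution, frame rate, bytes per pixel, bytes per word) is an illustrative assumption rather than anything reported about ShengShu's or any other lab's training pipeline, and the helper names are hypothetical. Production systems typically work on compressed or latent representations, so this measures raw data volume only, not actual training cost.

```python
# Back-of-envelope comparison; every constant below is an illustrative assumption.

def raw_video_bytes(width: int, height: int, fps: int, seconds: float,
                    bytes_per_pixel: int = 3) -> int:
    """Uncompressed size of an RGB clip (8 bits per channel by default)."""
    frames = int(fps * seconds)
    return width * height * bytes_per_pixel * frames


def raw_text_bytes(words: int, bytes_per_word: int = 6) -> int:
    """Rough size of plain English text, assuming ~5 letters plus a space per word."""
    return words * bytes_per_word


# One second of 1080p video at 24 frames per second, before any compression.
video = raw_video_bytes(1920, 1080, fps=24, seconds=1.0)

# A 1,000-word article, roughly the length of a news story.
text = raw_text_bytes(1_000)

ratio = video / text
print(f"1 s of raw 1080p video: {video:,} bytes")
print(f"1,000 words of text:    {text:,} bytes")
print(f"ratio: about {ratio:,.0f}x")
```

Under these assumptions, a single second of raw video carries on the order of tens of thousands of times more bytes than a full article of text, which is one reason video-based world models place heavier demands on storage, bandwidth, and compute.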

ShengShu’s latest model, Vidu Q3 Pro, represents a significant step toward addressing these challenges. By achieving high rankings on independent leaderboards like Artificial Analysis, the startup has demonstrated that it can compete with the best-funded labs in Silicon Valley. The company’s ability to raise 2.6 billion yuan in total funding within a short period reflects strong investor confidence in its technical trajectory. For Alibaba, ShengShu serves as both a strategic partner and a testbed for integrating these advanced capabilities into the Alibaba Cloud infrastructure, potentially offering world-modeling-as-a-service to global clients.

Furthermore, the participation of TAL Education in the funding round highlights the cross-industry appeal of these models. In the educational sector, world models can be used to create immersive, physics-accurate simulations for science and engineering students. In the gaming and entertainment sectors, these tools can automate the creation of complex environments, reducing the time and cost of digital asset production by orders of magnitude. The "general" nature of the world model means that the same underlying technology that helps a robot pick up a glass of water can also be used to generate a cinematic sequence for a film or a simulation for an autonomous drone.

The broader geopolitical context also plays a role in this investment surge. As the United States and China vie for dominance in the semiconductor and AI sectors, the ability to develop home-grown, world-leading models has become a matter of national economic security. China’s focus on the "real economy" of manufacturing and physical infrastructure aligns closely with the development of embodied AI. While the West has seen significant capital flow into consumer-facing AI applications, the Chinese strategy appears increasingly focused on industrial applications of these technologies.

As we look toward the latter half of the decade, the distinction between the digital and physical worlds will continue to blur. Alibaba’s investment in ShengShu is more than just a financial transaction; it is a declaration of intent. It suggests that the next frontier of the digital revolution will be found not in better chatbots, but in machines that can see, understand, and interact with the world much as humans do. The transition from "Large Language Models" to "General World Models" represents the graduation of artificial intelligence from a sophisticated mimic of human speech to a functional inhabitant of our physical reality. For the global economy, the successful deployment of these models could unlock trillions of dollars in value, fundamentally altering the nature of work, production, and human-machine interaction.