The global semiconductor landscape is currently witnessing a strategic pivot by its most dominant player, as Nvidia prepares to launch a new generation of chips specifically optimized for artificial intelligence "inference." This move marks a critical evolution in the company’s roadmap, shifting focus from the massive, power-hungry clusters used to train large language models (LLMs) to the high-efficiency hardware required to run those models in real-world applications. For nearly two years, Nvidia has enjoyed a near-monopoly on the "training" phase of the AI revolution, but as the industry matures, the economic battlefield is shifting toward the day-to-day execution of AI tasks—a segment where a growing legion of challengers believes the tech giant is finally vulnerable.

To understand the significance of Nvidia’s latest maneuver, one must distinguish between the two primary phases of artificial intelligence development. Training is the computationally intensive process of "teaching" an AI model by feeding it trillions of data points, a task that requires the immense raw horsepower of Nvidia’s flagship H100 and H200 GPUs. Inference, conversely, is the stage where a pre-trained model is put to work—answering a user’s query, generating an image, or analyzing a medical scan. While training happens once (or periodically), inference happens every time a user interacts with an AI tool. Consequently, the long-term market for inference hardware is projected to dwarf the training market as AI integration becomes ubiquitous across enterprise software, consumer electronics, and industrial automation.
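The "train once, infer constantly" argument comes down to simple arithmetic. The sketch below estimates how long it takes for cumulative inference spending to match a one-time training bill. It is an illustration only: the function name and every dollar and traffic figure are hypothetical placeholders, not numbers from Nvidia or any analyst.

```python
def days_until_inference_matches_training(
    training_cost_usd: float,
    cost_per_query_usd: float,
    queries_per_day: float,
) -> float:
    """Days of serving traffic before cumulative inference cost equals the training cost."""
    daily_inference_cost = cost_per_query_usd * queries_per_day
    if daily_inference_cost <= 0:
        raise ValueError("cost_per_query_usd and queries_per_day must be positive")
    return training_cost_usd / daily_inference_cost


# Hypothetical inputs, chosen only to make the arithmetic easy to follow.
break_even_days = days_until_inference_matches_training(
    training_cost_usd=100_000_000,  # assumed one-time training bill
    cost_per_query_usd=0.01,        # assumed cost to answer one query
    queries_per_day=10_000_000,     # assumed daily traffic
)
print(f"Inference spending matches training after about {break_even_days:.0f} days")
```

Under these assumed inputs, every further day of traffic adds to the inference bill while the training cost stays fixed, which is the reasoning behind forecasts that inference will become the larger market.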

Market analysts estimate that the total addressable market for AI accelerators could reach $400 billion by 2027. Currently, Nvidia controls roughly 80% to 95% of the data center AI chip market. However, the hardware requirements for inference differ fundamentally from those of training. While training demands maximum throughput and massive memory bandwidth, inference prioritizes low latency (speed of response) and energy efficiency (cost per query). This technical nuance has opened a window for competitors to design specialized Application-Specific Integrated Circuits (ASICs) that can perform inference tasks faster and at a fraction of the power consumption of Nvidia’s general-purpose GPUs.

The competitive pressure is mounting on three distinct fronts: traditional rivals, agile startups, and Nvidia’s own largest customers. Advanced Micro Devices (AMD) has emerged as the most formidable traditional challenger, with its MI300X accelerator gaining significant traction among hyperscale buyers like Microsoft and Meta. AMD CEO Lisa Su has positioned the company as a high-performance alternative, specifically highlighting the MI300’s larger memory capacity, which eases a critical bottleneck in running massive models. Intel is also attempting a comeback with its Gaudi 3 processor, which it claims offers a better price-to-performance ratio for inference than Nvidia’s previous generation of hardware.

Simultaneously, a new wave of well-funded startups is attempting to disrupt the status quo with radical architectures. Companies such as Groq, Cerebras, and SambaNova have developed "inference engines" designed to bypass the traditional architectural limitations of GPUs. Groq, in particular, has garnered attention for its Language Processing Units (LPUs), which can generate text at speeds that make Nvidia’s hardware look sluggish by comparison. These startups argue that because Nvidia’s chips are "Swiss Army knives" designed to do everything from gaming to scientific simulation, they are inherently less efficient than a "scalpel" designed specifically for LLM inference.

Perhaps the most existential threat to Nvidia’s dominance, however, comes from the "hyperscalers"—the very companies that have fueled Nvidia’s record-breaking revenues. Amazon (AWS), Google, and Microsoft are all pouring billions into custom silicon. Amazon’s "Inferentia" chips and Google’s Tensor Processing Units (TPUs) are already being used internally to lower the astronomical costs of running AI services. By designing their own chips, these tech giants can optimize the hardware for their specific software stacks, effectively cutting Nvidia out of the value chain. For Nvidia, the launch of a dedicated inference-focused chip is not just an expansion; it is a defensive necessity to prevent its biggest clients from becoming its biggest competitors.

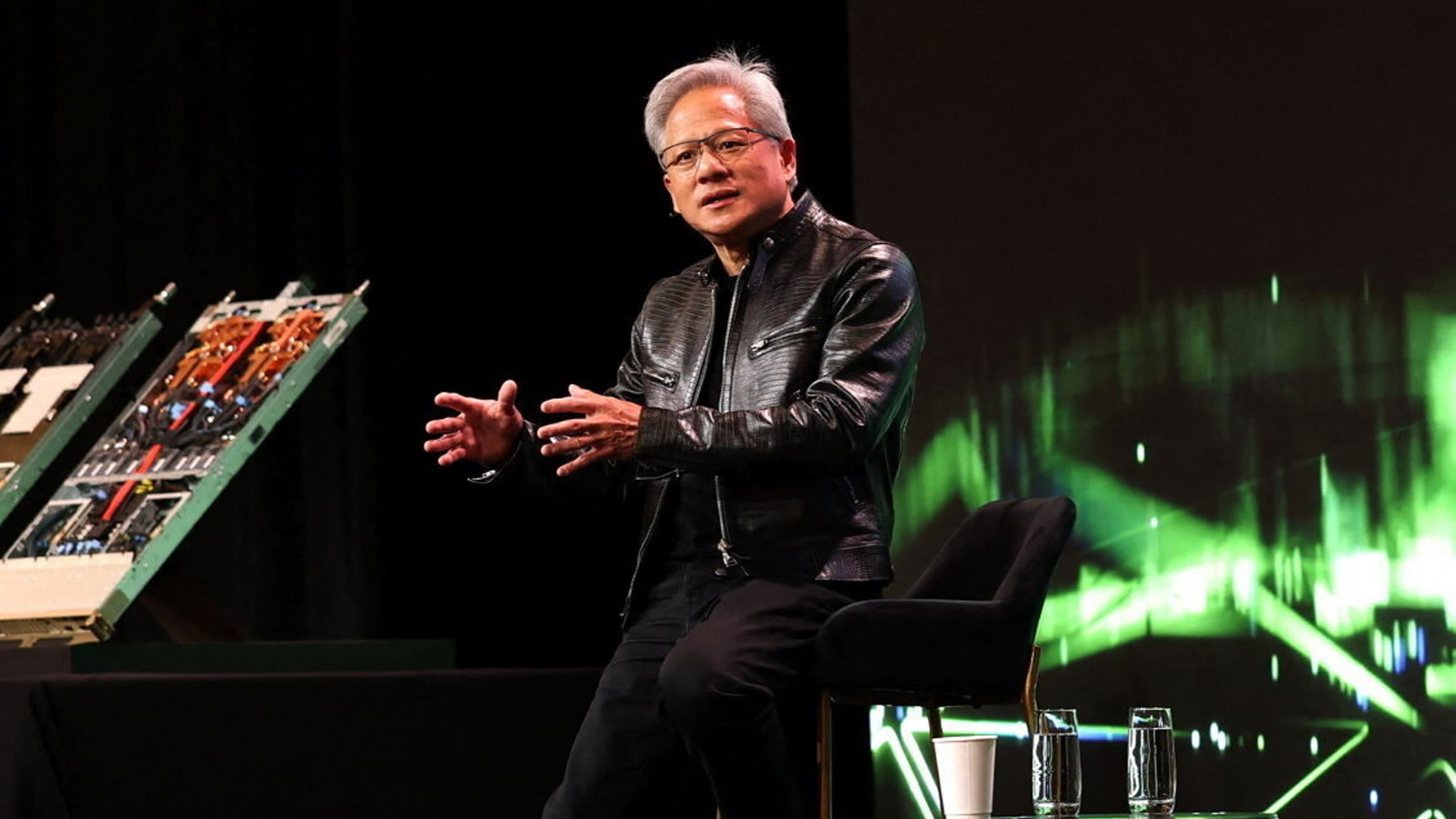

Nvidia’s response centers on its new Blackwell architecture, which CEO Jensen Huang has described as the engine of a new industrial revolution. The Blackwell platform is designed to be a "full-stack" solution, integrating hardware and software to achieve what Nvidia says is up to a 30-fold increase in performance for LLM inference workloads compared to the H100. By significantly reducing the energy consumption and cost associated with running models like GPT-4 or Llama 3, Nvidia aims to make switching to alternative hardware economically unattractive for customers.

A key component of Nvidia’s enduring moat is its CUDA software platform. Over more than a decade, millions of developers have built their AI applications on CUDA, creating a powerful network effect. While competitors can build faster chips, porting years of optimized software code to a new architecture is a daunting and expensive task for most enterprises. Nvidia is doubling down on this advantage by releasing "NIMs" (Nvidia Inference Microservices), pre-packaged software containers that allow businesses to deploy AI models on Nvidia hardware with minimal configuration. This "software-defined hardware" strategy aims to lock customers into the Nvidia ecosystem by making the deployment process as seamless as possible.

The economic implications of this hardware race extend far beyond Silicon Valley. As the cost of inference drops, the "cost per token" (a token being the basic unit of AI output) becomes one of the most important metrics in the digital economy. Lowering this cost is essential for the commercial viability of AI agents that can handle complex, multi-step tasks, since each task can consume many thousands of tokens. If Nvidia can successfully drive down the cost of inference, it will accelerate the adoption of AI in sectors like finance, where real-time fraud detection is critical, and healthcare, where rapid genomic sequencing can save lives.
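Cost per token can be approximated from two quantities: what an accelerator costs to run per hour and how many tokens it generates per second. The sketch below shows that calculation for two chips. The function name, the hourly prices, and the throughput figures are all hypothetical and do not describe any real product.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Dollar cost to generate one million tokens on a single accelerator."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


# Hypothetical chips: the second costs 50% more per hour but is three times faster.
baseline = cost_per_million_tokens(hourly_cost_usd=4.0, tokens_per_second=1000)
faster = cost_per_million_tokens(hourly_cost_usd=6.0, tokens_per_second=3000)

print(f"Baseline chip: ${baseline:.2f} per million tokens")
print(f"Faster chip:   ${faster:.2f} per million tokens")
```

In this made-up comparison the more expensive chip still halves the cost per token, because throughput rises faster than the hourly price. That is the kind of trade-off buyers weigh when comparing GPUs with specialized inference hardware.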

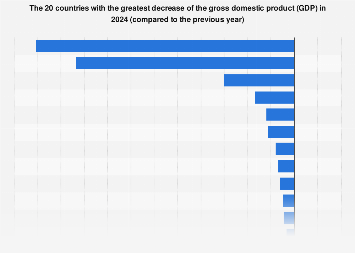

However, the path forward is fraught with macroeconomic and geopolitical challenges. The global supply chain remains a delicate bottleneck, with Nvidia heavily reliant on Taiwan Semiconductor Manufacturing Company (TSMC) for its advanced 4-nanometer and 3-nanometer processes. Any disruption in the Taiwan Strait would have catastrophic consequences for the entire AI industry. Furthermore, tightening U.S. export controls on high-end AI silicon to China has forced Nvidia to design "nerfed" versions of its chips for the Chinese market, creating an opening for domestic Chinese champions like Huawei to gain ground in the world’s second-largest economy.

Investor sentiment also reflects a growing scrutiny of the "AI ROI" (return on investment). While Big Tech companies have spent hundreds of billions on Nvidia hardware, the revenue generated from AI-powered products is still in its nascent stages. If the "inference explosion" does not materialize as quickly as predicted, there is a risk of a massive capital expenditure correction. Nvidia’s pivot to inference-optimized chips is, in many ways, a bet that the demand for AI execution will be more resilient and widespread than the initial burst of model training.

As the industry moves into the second half of 2024 and into 2025, the battle for the inference market will likely define the next decade of computing. Nvidia is no longer just competing against other chipmakers; it is competing against the laws of physics and the bottom lines of the world’s most powerful corporations. By launching hardware specifically tuned for the "working life" of AI models, Nvidia is attempting to prove that its dominance was not a fleeting moment of the training era, but the foundation of a new era of ubiquitous, automated intelligence. Whether its proprietary software and rapid innovation cycles can fend off the specialized efficiency of its rivals remains the multi-trillion-dollar question hanging over the global markets.