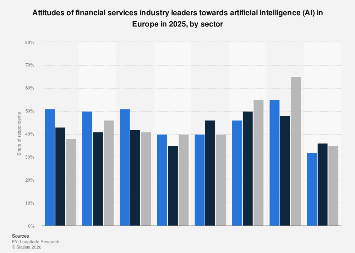

European financial services executives in 2025 find themselves at a critical juncture, grappling with complex and often contradictory perceptions of artificial intelligence (AI) in their sectors. While the promise of enhanced efficiency and innovation remains a powerful draw, deep-seated concerns about job displacement, ethical oversight, and consumer trust cast a long shadow over the widespread adoption of this transformative technology. Sentiment is not uniform: distinct attitudinal fault lines run across wealth and asset management, insurance, and the banking and capital markets segments of the continent’s financial ecosystem.

The specter of job losses looms largest in wealth and asset management, where a significant proportion of leaders expressed apprehension about AI’s potential to automate roles currently held by human professionals. This concern, while present across the financial services spectrum, appears particularly acute in sectors that rely heavily on personalized client interaction and sophisticated analytical capabilities, which AI is increasingly capable of replicating. By comparison, the fear of job displacement, though still significant, registers at lower levels in insurance and in the broader banking and capital markets segment. This disparity suggests that the perceived immediate impact of AI on human capital varies considerably, influenced by the nature of the work, the level of human interaction, and the existing technological infrastructure within each sub-sector.

Beyond job security, a pervasive worry centers on the potential for AI systems to operate without adequate human supervision and ethical frameworks. A substantial percentage of respondents, varying between sectors, voiced concerns that AI applications might be deployed without the necessary checks and balances to ensure responsible and fair outcomes. This points to a broader industry-wide challenge in establishing robust governance structures capable of overseeing complex AI algorithms, particularly those that influence critical financial decisions, manage sensitive data, or interact directly with customers. The absence of clear regulatory guidelines and industry best practices in this nascent field amplifies these anxieties, leaving many executives hesitant to fully embrace AI without stronger assurances of control and accountability.

Despite these reservations, the operational advantages offered by AI are undeniable and are increasingly being recognized as a compelling reason for its adoption. Leaders within the banking sector, in particular, have identified the automation of routine tasks as a primary use case for AI. This recognition is shared by their counterparts in the insurance industry, where repetitive processes such as claims processing and risk assessment are ripe for AI-driven optimization. The ability of AI to streamline workflows, reduce manual errors, and free up human resources for more strategic endeavors is a powerful incentive, driving investment and exploration in these areas. Furthermore, a notable percentage of banking executives believe that AI significantly enhances the efficiency and effectiveness of technical work, suggesting its potential to revolutionize roles in areas like data analysis, fraud detection, and algorithmic trading.

However, the optimistic outlook on operational efficiencies is tempered by a significant deficit in consumer trust. Across all sectors, a remarkably small proportion of executives believe that consumers have faith in financial institutions to manage AI in their best interests. This sentiment highlights a critical disconnect between the industry’s embrace of AI and the public’s perception of its safety and fairness. For widespread AI adoption to be truly successful, financial firms must not only develop sophisticated technological solutions but also actively work to build and maintain consumer confidence. This requires a concerted effort to ensure transparency in AI decision-making, robust data privacy measures, and clear communication about how AI is being used and the safeguards in place.

The global context for these evolving attitudes is crucial. As European financial institutions deliberate on AI integration, they are doing so within a dynamic international landscape. North American and Asian financial hubs, for instance, have often been at the forefront of AI innovation and adoption. While precise comparative data for 2025 is still emerging, historical trends suggest that markets with a more aggressive venture capital ecosystem and a less stringent initial regulatory approach may have seen faster, albeit potentially riskier, AI deployment. European regulators, often characterized by a more cautious and principles-based approach, are striving to balance innovation with consumer protection and financial stability. This regulatory environment, while potentially slowing down immediate adoption, could ultimately foster more sustainable and trustworthy AI integration in the long run.

The economic implications of these divergent attitudes are profound. Sectors that successfully harness AI for operational efficiencies are likely to gain a competitive edge through cost reductions and improved service delivery. This could lead to market consolidation, with larger, more technologically advanced firms outperforming their peers. Conversely, sectors where concerns about job losses and consumer trust impede adoption may face slower growth and increased pressure to adapt. The potential for AI to create new job categories, such as AI ethicists, data scientists specializing in AI governance, and AI system trainers, offers a counterpoint to job displacement fears, but requires significant investment in workforce retraining and upskilling initiatives.

The banking and capital markets sector, given its scale and the inherent complexity of its operations, is a particularly important bellwether. The enthusiastic embrace of AI in this segment, both for automating tasks and for supporting technical work, suggests a potential for significant disruption in areas like customer service, back-office operations, and even aspects of trading and risk management. However, the underlying concern about consumer trust remains a critical bottleneck. If banks fail to allay public apprehension about data security, algorithmic bias, and fair treatment, their ability to leverage AI for customer-facing services could be severely limited, potentially driving customers towards less regulated or more transparent FinTech alternatives.

In insurance, the automation of routine tasks like underwriting and claims processing offers a clear path to cost savings and faster service. Yet the lower level of concern about job losses, relative to wealth management, might be misleading. The potential for AI to redefine actuarial science, risk assessment, and personalized policy offerings is immense, and while immediate job displacement might be less of a headline concern, the long-term evolution of the insurance workforce will undoubtedly be shaped by AI’s capabilities. The success of AI in this sector will hinge on its ability to enhance rather than replace the empathetic, nuanced decision-making required in areas like complex claims and personalized financial advice.

Ultimately, the 2025 landscape for AI in European finance is one of careful navigation. The undeniable operational benefits are pushing many firms forward, particularly in banking and insurance. However, the persistent anxieties surrounding job security, ethical oversight, and, most critically, consumer trust demand a measured and responsible approach. The future success of AI integration will depend not solely on technological prowess but on the industry’s collective ability to build robust governance frameworks, foster transparency, and earn the enduring confidence of the individuals and businesses it serves. The path ahead is one of both immense opportunity and significant challenge, requiring a delicate balance between innovation and responsible stewardship.