For decades, the business of high-end data and professional publishing was considered one of the most resilient sectors in the global economy, characterized by "sticky" subscriptions, high barriers to entry, and the kind of pricing power that made companies like Relx, Wolters Kluwer, and Pearson darlings of the institutional investor class. However, the rapid ascendancy of generative artificial intelligence (AI) has introduced a profound structural tremor into this once-stable landscape. As large language models (LLMs) demonstrate an unprecedented ability to synthesize, summarize, and even generate complex information, the market is beginning to question the long-term defensibility of traditional information "moats." This shift in sentiment has triggered a period of heightened volatility and downward pressure on stock prices, as investors grapple with whether these legacy giants are the next victims of technological disruption or the silent beneficiaries of a new data-hungry era.

The core of investor anxiety lies in a fundamental shift in how information is consumed and processed. In the pre-AI era, the value proposition of a data provider or academic publisher was built on curation and searchability. Professionals, whether lawyers, doctors, or financial analysts, paid premium prices for access to proprietary databases because the cost of finding that information manually was prohibitively high. Today, AI models trained on vast swaths of the internet can provide immediate, conversational answers to complex queries, often bypassing the need for a user to interact with a specialized search interface. If an AI can provide a "good enough" summary of legal precedents or medical research, the perceived value of a $20,000-a-year subscription to a specialized database begins to erode.

This pressure was most visibly demonstrated in the education and academic sectors. When Chegg, an American education technology firm, acknowledged in May 2023 that student interest in ChatGPT was hurting its new subscriber growth, the shockwaves were felt globally. It served as a "canary in the coal mine" moment for the broader industry. Investors quickly began to de-risk their portfolios, leading to a broader sell-off in European and American publishing stocks. Even diversified giants like Relx, which has spent billions transitioning from a traditional publisher to a sophisticated data analytics firm, have seen their valuation multiples come under intense scrutiny. The market is no longer satisfied with steady 5% growth; it is demanding proof that these companies can survive in an environment where "content" is increasingly treated as a commodity by Silicon Valley’s AI labs.

However, the economic narrative is far more nuanced than a simple story of obsolescence. While AI represents a threat to the traditional delivery of information, it also creates an insatiable demand for the very thing these companies possess: high-quality, verified, and structured data. LLMs are notorious for "hallucinations"—generating plausible-sounding but factually incorrect information. For high-stakes industries like healthcare, law, and engineering, the risks of relying on a general-purpose AI are too high. This creates a potential "flight to quality" where the proprietary datasets held by legacy publishers become more valuable as the "ground truth" used to train or refine specialized AI models.

We are already seeing the emergence of a two-tiered market in the publishing world. On one side are the general news and lifestyle publishers who are struggling to protect their content from being scraped by AI bots. On the other side are the strategic players who are leaning into licensing deals. For instance, the multi-year partnership between News Corp and OpenAI, reportedly worth more than $250 million, signals a new revenue stream where content is sold as "fuel" for AI development rather than just a product for human readers. Similarly, Reddit’s 2024 IPO was buoyed significantly by its data-licensing agreement with Google. These deals suggest that while the front-end subscription model may be under pressure, the back-end data-licensing model could offer a lucrative lifeline.

From a macroeconomic perspective, the disruption of the data sector reflects a broader "Paradox of Abundance." As the volume of AI-generated content explodes, the relative value of human-verified, peer-reviewed, and legally sound information should, in theory, increase. However, the transition period is fraught with financial risk. Margin compression is a significant concern; as publishers invest heavily in their own AI capabilities to stay competitive, their capital expenditure rises. Simultaneously, they may be forced to lower subscription prices to retain customers who are tempted by cheaper, AI-powered alternatives. This "pincer movement" on margins is exactly what has institutional investors on edge, leading to the current compression in Price-to-Earnings (P/E) ratios across the sector.
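The arithmetic behind that "pincer movement" can be sketched in a few lines. Every figure below is hypothetical and chosen only to show the mechanism: revenue falls because of price cuts, costs rise because of AI spending, and a lower earnings multiple is then applied to the smaller profit. None of these numbers describe any real company.

```python
def operating_margin(revenue: float, costs: float) -> float:
    """Operating margin as a fraction of revenue."""
    return (revenue - costs) / revenue


def implied_value(earnings: float, multiple: float) -> float:
    """Value implied by applying an earnings multiple to earnings."""
    return earnings * multiple


# Hypothetical starting point: 100 of revenue, 70 of costs, valued at 25x earnings.
revenue, costs, multiple = 100.0, 70.0, 25.0
margin_before = operating_margin(revenue, costs)
value_before = implied_value(revenue - costs, multiple)

# Hypothetical squeeze: price cuts trim revenue to 95, AI spending lifts
# costs to 75, and the market re-rates the multiple down to 20x.
squeezed_revenue, squeezed_costs, squeezed_multiple = 95.0, 75.0, 20.0
margin_after = operating_margin(squeezed_revenue, squeezed_costs)
value_after = implied_value(squeezed_revenue - squeezed_costs, squeezed_multiple)

print(f"margin: {margin_before:.1%} -> {margin_after:.1%}")
print(f"implied value: {value_before:.0f} -> {value_after:.0f}")
```

In this illustration a modest price cut and a modest cost increase together take roughly nine points off the margin, and the lower multiple compounds the hit to the implied value, which is why the two pressures worry investors more in combination than either would alone.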

The legal and regulatory environment adds another layer of complexity to the stock performance of these firms. The ongoing litigation between The New York Times and OpenAI and Microsoft is a closely watched case that could redefine the "fair use" doctrine in the age of AI. If the courts rule that using copyrighted material to train LLMs requires a license, it could create a substantial windfall for publishers and data owners, potentially leading to a sharp rally in their stock prices. Conversely, a ruling in favor of the tech giants would sharply limit publishers' ability to charge for the use of their content in training, which could permanently impair their business models. Investors are currently pricing in this uncertainty, treating many data stocks as "wait and see" assets.

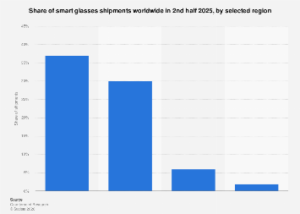

Global comparisons further highlight the uneven impact of this technological shift. In the United States, where the tech sector is dominant, the pressure on traditional publishers has been more acute as they compete directly with the developers of the most advanced LLMs. In Europe, where regulatory frameworks like the EU AI Act are more stringent regarding copyright protections and data transparency, publishers may find a more protective environment. Meanwhile, in Asian markets, particularly China, the integration of AI into data services is moving at a blistering pace, often driven by state-backed initiatives that prioritize technological self-sufficiency over traditional copyright concerns.

The strategic response from industry leaders has been to pivot from being "content providers" to "workflow integrators." Companies like Bloomberg and Wolters Kluwer are not just selling data; they are embedding AI assistants directly into the professional workflows of their clients. BloombergGPT, for example, is a large language model trained in large part on Bloomberg's own archive of financial data, which spans decades. By creating tools that general-purpose models cannot easily replicate, these companies are attempting to rebuild their moats. The success of these internal AI projects will likely be the deciding factor in which stocks recover and which continue to languish.

Ultimately, the pressure on data and publishing stocks is a reflection of a fundamental reordering of the digital economy. The "search-and-click" era, which defined the internet for two decades, is giving way to a "prompt-and-answer" era. For companies that have built their fortunes on the former, the transition is painful and expensive. While the threat of AI is real and has already begun to erode the market capitalization of several industry stalwarts, the long-term outlook is not necessarily terminal. The companies that can successfully navigate the legal minefields, secure high-value licensing deals, and integrate AI to enhance—rather than just replace—their core offerings may emerge from this period of volatility stronger than before. For now, however, the market remains skeptical, and the burden of proof lies squarely on the shoulders of the legacy giants to demonstrate that their data is still worth the premium they charge.