The rapid proliferation of generative artificial intelligence (GenAI) across the technological landscape has irrevocably reshaped expectations for productivity, particularly within the demanding realm of software development. As engineering teams globally embrace AI-powered coding assistants, the promise of accelerated development cycles, reduced boilerplate, and enhanced efficiency is undeniably compelling. Yet, beneath this veneer of innovation lies a growing paradox: while these sophisticated tools can unlock unprecedented velocity, their indiscriminate application, especially within complex existing systems, can inadvertently sow the seeds of future systemic fragility, manifesting as crippling technical debt. This hidden cost, often overlooked in the initial fervor of adoption, threatens to undermine the very gains GenAI promises, posing a significant strategic challenge for businesses aiming to maintain long-term digital resilience.

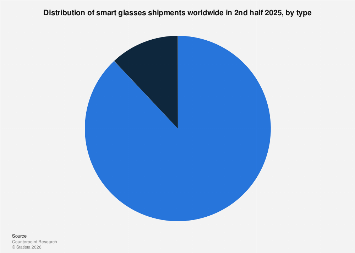

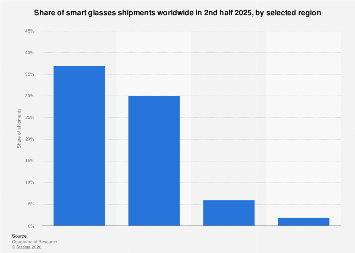

The allure of AI in coding is multifaceted and potent. From intelligent code completion and suggestion engines to automated debugging and refactoring tools, GenAI is being deployed across various stages of the software development lifecycle. Market forecasts indicate a dramatic surge in this sector, with some projections estimating the global AI in software development market to reach tens of billions of dollars within the next five years, driven by the imperative for faster innovation and cost optimization. Early adopters report significant improvements in developer velocity, with some studies suggesting productivity boosts of 20-40% for certain tasks. This efficiency gain is particularly attractive in a competitive global economy where time-to-market can be a decisive factor. The democratizing effect of these tools, enabling developers with varying levels of experience to produce functional code more quickly, further fuels their widespread adoption. However, this ease of generation can mask deeper issues, particularly when AI operates within "brownfield" environments – existing, often intricate, and legacy codebases that are the backbone of many enterprise operations.

Technical debt, colloquially understood as the future work required to address present-day poor-quality code, is a pervasive challenge in software engineering. It accumulates when expedient, short-term solutions are prioritized over robust, well-engineered ones, leading to code that is difficult to understand, maintain, debug, and extend. Historically, this debt has been primarily attributed to human factors: tight deadlines, insufficient resources, or a lack of foresight. GenAI, however, introduces a new, amplified vector for its accumulation. When AI-generated code is integrated into an existing brownfield system, it might not always align with the established architectural patterns, coding standards, or underlying business logic. An AI, operating on statistical patterns and broad training data, lacks the nuanced contextual understanding of a specific enterprise system’s history, dependencies, and future roadmap. The result can be code that is functional but inefficient, overly complex, or redundant, or that introduces subtle incompatibilities which only manifest months or years later during critical operations or updates.

The economic ramifications of escalating technical debt are substantial. Industry analyses consistently put the global cost of technical debt in the trillions of dollars annually, encompassing increased maintenance expenditures, delayed feature deployments, reduced developer morale, and heightened cybersecurity risks. For a typical enterprise, a significant portion of the IT budget is already consumed by maintaining legacy systems, often exceeding the allocation for new development. The unchecked introduction of AI-generated technical debt can exacerbate this imbalance, diverting even more resources towards remediation rather than innovation. This creates a vicious cycle: the pressure to deliver quickly leads to reliance on AI, which in turn generates debt, which slows future development and increases the pressure further. Moreover, code generated without full contextual awareness can inadvertently introduce security vulnerabilities or performance bottlenecks that are difficult to trace and costly to rectify, potentially leading to significant operational disruptions or data breaches.

Navigating this complex landscape requires a strategic and nuanced approach, as articulated by thought leaders in the field. Experts emphasize that the core challenge lies not in the AI tools themselves, but in how organizations integrate and govern their use. The immediate productivity benefits are tangible, but without robust guardrails, the long-term costs can far outweigh these gains. This necessitates a shift in organizational culture and operational directives. Companies must move beyond simply measuring lines of code produced and instead focus on metrics that reflect code quality, maintainability, and architectural integrity.

One critical strategy involves maintaining a "human-in-the-loop" approach, positioning AI as an intelligent assistant rather than an autonomous developer. This makes rigorous code review even more important, with human engineers critically evaluating AI-generated suggestions for adherence to architectural principles, security best practices, and overall code quality. Organizations like Culture Amp have pioneered clear directives and robust guardrails for their engineering teams, delineating permissible uses for AI coding tools and areas where human oversight is non-negotiable, particularly when interacting with core business logic or critical infrastructure. This approach ensures that the expertise and contextual understanding of human developers remain central to the development process, leveraging AI for acceleration while mitigating its potential to introduce hidden flaws.
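To make the idea of a guardrail concrete, here is a minimal sketch of a pre-merge check that lists AI-assisted changes touching areas where human sign-off is mandatory. It is not any particular company's policy: the path patterns, the `ai_assisted` flag, and the function name are all hypothetical and would need to be adapted to a real repository and review workflow.

```python
from fnmatch import fnmatch

# Hypothetical policy: glob patterns for areas where human review is mandatory.
# These paths are illustrative assumptions, not taken from any real codebase.
PROTECTED_PATTERNS = ["billing/*", "auth/*", "infra/*.tf"]


def requires_human_review(changed_files, ai_assisted, patterns=PROTECTED_PATTERNS):
    """Return the changed files that fall under a protected pattern.

    Only AI-assisted changes are gated here; the normal review process
    is assumed to apply to everything else.
    """
    if not ai_assisted:
        return []
    return sorted(
        path for path in changed_files
        if any(fnmatch(path, pattern) for pattern in patterns)
    )


if __name__ == "__main__":
    flagged = requires_human_review(
        ["billing/invoice.py", "docs/readme.md"], ai_assisted=True
    )
    for path in flagged:
        print(f"needs explicit human sign-off: {path}")
```

A check like this could run in a CI job and block the merge until a named reviewer approves the flagged files. How a change gets marked as AI-assisted (a commit trailer, a pull request label, or similar) is left open here.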

Furthermore, effective integration of GenAI requires a significant investment in developer training and upskilling. Engineers must learn not only how to effectively prompt AI tools to generate useful code but also how to critically assess, refine, and integrate AI output responsibly. This includes developing a deeper understanding of software architecture patterns, secure coding practices, and the inherent limitations of AI. Tools for static code analysis, automated testing, and continuous integration/continuous deployment (CI/CD) pipelines must be enhanced to identify and flag potential issues introduced by AI-generated code, acting as an additional layer of defense against debt accumulation. Moreover, strategic architectural decisions are crucial; for instance, isolating AI-generated components into well-defined modules with clear interfaces can help contain potential quality issues and simplify future refactoring.
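The isolation strategy can be sketched as follows, assuming a small, hypothetical slug-generation feature. The interface (`SlugGenerator`), the stand-in implementation, and the contract checks are invented for illustration. The idea is that the rest of the system depends only on a human-owned interface and a human-written contract, so a generated implementation can be replaced without touching its callers.

```python
from typing import Protocol


class SlugGenerator(Protocol):
    """Human-owned interface: the only surface the rest of the system sees."""

    def slugify(self, title: str) -> str: ...


class GeneratedSlugifier:
    """Stand-in for an AI-generated implementation, kept in its own module."""

    def slugify(self, title: str) -> str:
        # Replace non-alphanumeric characters with spaces, then join words.
        cleaned = "".join(c.lower() if c.isalnum() else " " for c in title)
        return "-".join(cleaned.split())


def check_contract(impl: SlugGenerator) -> None:
    """Human-written contract checks that any implementation must pass."""
    assert impl.slugify("Hello, World!") == "hello-world"
    assert impl.slugify("  spaced   out ") == "spaced-out"
    assert impl.slugify("") == ""


def publish_url(title: str, slugger: SlugGenerator) -> str:
    """Caller code depends on the interface, not on the generated class."""
    return f"/posts/{slugger.slugify(title)}"


if __name__ == "__main__":
    impl = GeneratedSlugifier()
    check_contract(impl)
    print(publish_url("Hello, World!", impl))
```

If the generated implementation later proves inefficient or hard to maintain, refactoring is confined to one module, and the contract checks show whether the replacement preserves behavior.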

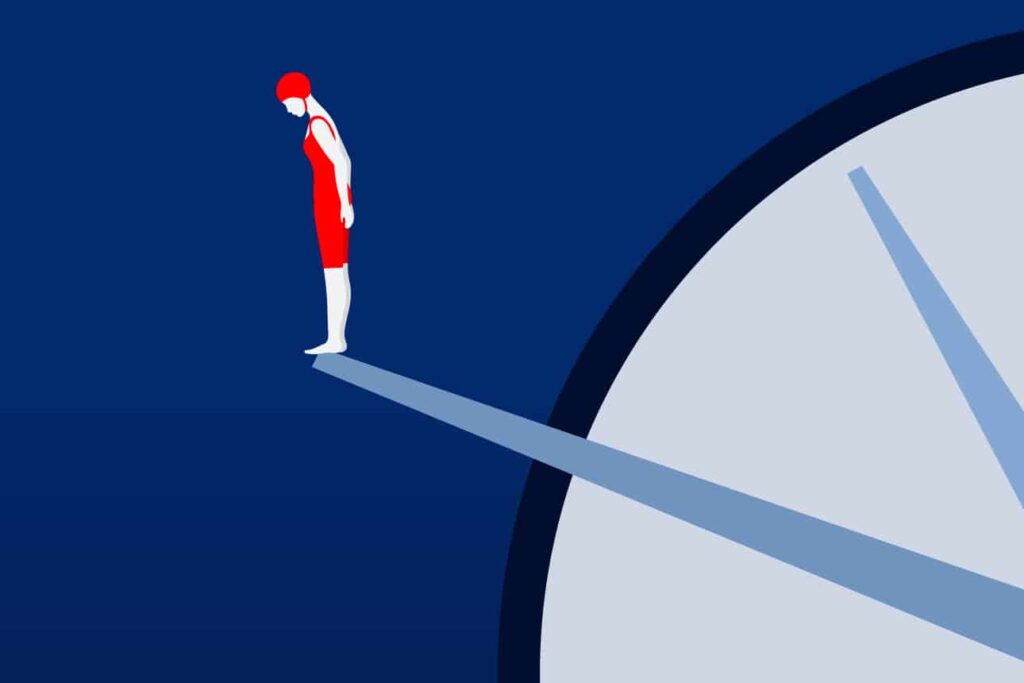

Looking ahead, the economic impact of effectively managing AI-induced technical debt will be a key differentiator in the global technology landscape. Enterprises that successfully harness GenAI’s productivity gains while rigorously managing its quality implications will gain a significant competitive advantage, enabling faster innovation cycles, more robust systems, and a more efficient allocation of resources. Conversely, those that fall into the productivity trap, allowing technical debt to proliferate unchecked, risk falling behind due to ballooning maintenance costs, stagnant development, and increasing vulnerability to system failures. The future of software development is undoubtedly AI-augmented, but its long-term sustainability hinges on a disciplined, forward-thinking approach that prioritizes code quality and architectural soundness alongside speed. The imperative for businesses is clear: embrace GenAI’s potential, but do so with an acute awareness of its hidden costs and a proactive strategy to mitigate them.