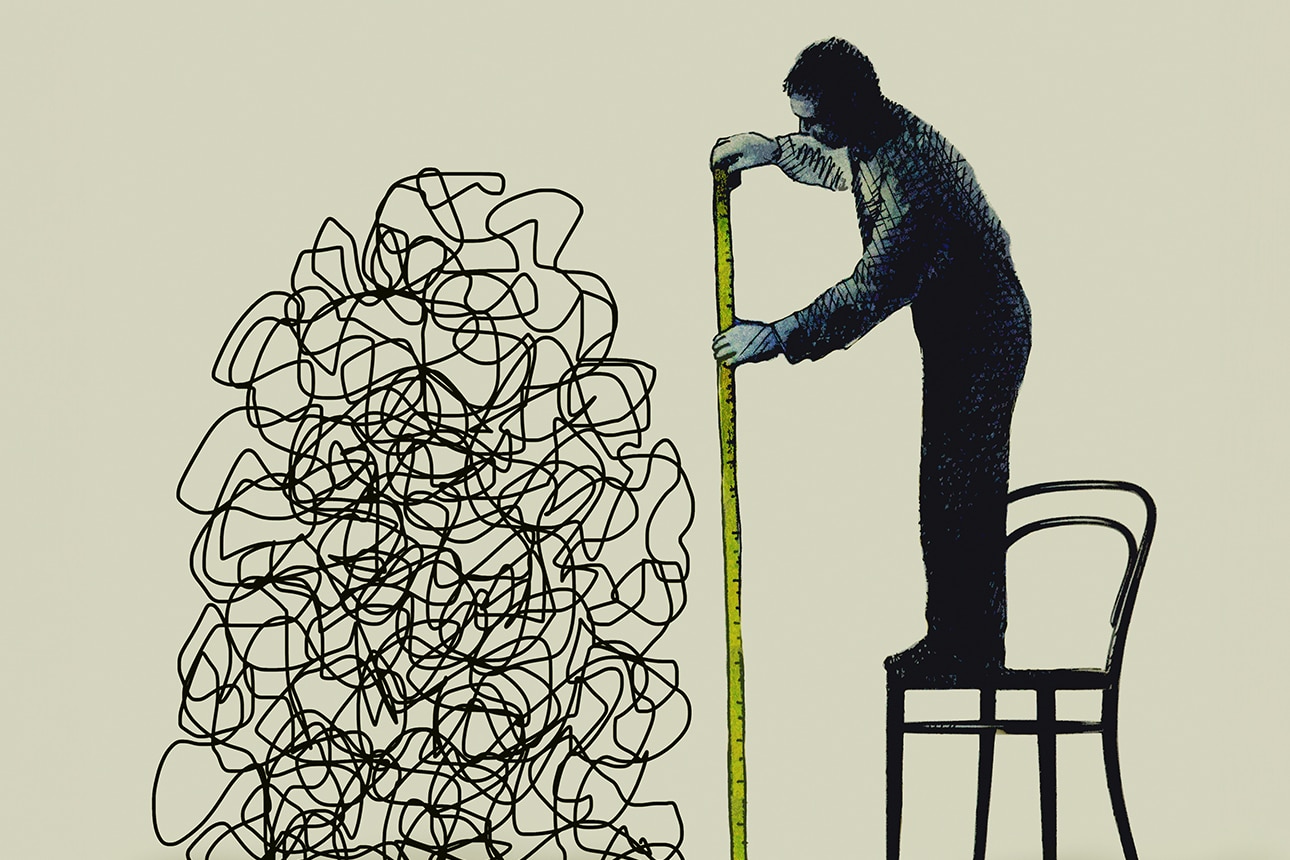

Organizations globally grapple with a pervasive challenge: the tendency for performance indicators, once established, to become ends in themselves, often at the expense of genuine strategic objectives. This phenomenon, famously articulated as Goodhart’s Law – "When a measure becomes a target, it ceases to be a good measure" – manifests in various forms, from subtle inefficiencies to catastrophic ethical breaches. The 2016 Wells Fargo scandal serves as a stark reminder: aggressive internal sales targets for customer accounts led employees to create millions of unauthorized accounts, and the bank ultimately incurred billions in fines, severe reputational damage, and a profound loss of public trust. The episode underscored how a single, narrowly defined metric, when tied to high-stakes incentives, can pervert organizational behavior, shifting focus from customer value and ethical conduct to metric attainment through illicit means.

The economic ramifications of such metric fixation extend far beyond individual corporate scandals. Across sectors, from healthcare to education to public administration, an over-reliance on easily quantifiable, yet imperfect, proxy measures can lead to resource misallocation, stifled innovation, and a decline in overall systemic quality. Research by economists suggests that the global cost of inefficiencies stemming from poorly designed performance management systems, including those susceptible to gaming, could run into hundreds of billions of dollars annually in lost productivity and corrective actions. Traditional remedies, such as the implementation of balanced scorecards or an expanded array of Key Performance Indicators (KPIs), have often proven insufficient, as these multi-metric frameworks can still be vulnerable to "cherry-picking" or opportunistic optimization if not designed with a deep understanding of human behavioral responses. The persistent struggle to align performance measurement with true organizational value highlights a critical need for a more sophisticated, robust methodology.

A compelling new perspective emerges from the field of artificial intelligence and machine learning research, offering useful insights into the design of resilient performance metrics. Organizations, in essence, function as complex adaptive systems, often striving to optimize for specific outcomes, much as machine learning algorithms are trained to optimize objective functions. In both contexts, the challenge lies in optimizing proxy measures that, while seemingly aligned with the ultimate goal, can diverge significantly from it under pressure. AI models frequently encounter issues akin to Goodhart’s Law during development and deployment. Two examples are "overfitting," where a model becomes so closely tuned to its training data that it performs poorly on new, unseen data, and "adversarial attacks," where minor, intentional perturbations to inputs can cause dramatic misclassifications. The strategies developed to counter these machine learning vulnerabilities provide a powerful framework for rethinking human organizational metrics. It is crucial to acknowledge, however, that organizations are composed of individuals with agency, complex motivations, and ethical considerations, a dimension largely absent from algorithms. Thus, these AI-inspired techniques offer conceptual frameworks requiring thoughtful human adaptation, not direct mechanistic application.

One foundational strategy derived from AI principles is the implementation of multi-objective optimization through diversified metric portfolios. Just as a machine learning model might be trained with a composite loss function that balances accuracy with regularization terms or fairness constraints, organizations should move beyond singular or even limited sets of KPIs. Instead, a comprehensive portfolio of metrics should be curated, encompassing leading and lagging indicators, quantitative and qualitative assessments, and measures spanning financial, operational, customer, and employee dimensions. This approach inherently makes it more challenging for individuals or teams to "game" the system by over-optimizing for a single, easily manipulated target. For instance, a sales team might be evaluated not just on gross revenue (a common, easily gamed metric), but also on customer lifetime value, repeat purchase rates, customer satisfaction scores, and adherence to ethical sales practices. This holistic view forces a broader, more sustainable strategy, discouraging narrow focus and fostering genuine value creation, much like how sophisticated AI systems balance competing priorities to achieve robust performance across diverse scenarios.
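To make the portfolio idea concrete, the following is a minimal Python sketch of one way a composite score could be computed, loosely analogous to a composite loss function. Everything in it is an illustrative assumption rather than a prescribed method: the `Metric` class, the `composite_score` function, the equal weights, the `floor` threshold, and the sample figures are all hypothetical. Two design choices do the work: each metric's attainment is capped at 100% of target, so overshooting one target earns no extra credit, and the overall score is scaled down when any single metric falls below the floor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    value: float   # observed value for the period
    target: float  # value that counts as fully meeting the goal (must be > 0)
    weight: float  # relative importance; weights are normalized in the score


def composite_score(metrics: list[Metric], floor: float = 0.5) -> float:
    """Weighted mean of capped attainment ratios, penalized by the weakest metric.

    Hypothetical scoring rule: attainment is value / target, capped at 1.0.
    If the weakest attainment is below `floor`, the weighted mean is
    multiplied by that weakest attainment.
    """
    total_weight = sum(m.weight for m in metrics)
    attainments = [min(m.value / m.target, 1.0) for m in metrics]
    weighted = sum(a * m.weight for a, m in zip(attainments, metrics)) / total_weight
    worst = min(attainments)
    return weighted * worst if worst < floor else weighted


# Illustrative (made-up) figures, expressed as fractions of target.
balanced_team = [
    Metric("gross_revenue", 1.0, 1.0, 0.25),
    Metric("customer_lifetime_value", 0.9, 1.0, 0.25),
    Metric("repeat_purchase_rate", 0.9, 1.0, 0.25),
    Metric("customer_satisfaction", 0.9, 1.0, 0.25),
]
revenue_chasing_team = [
    Metric("gross_revenue", 2.0, 1.0, 0.25),  # double the revenue target
    Metric("customer_lifetime_value", 0.6, 1.0, 0.25),
    Metric("repeat_purchase_rate", 0.5, 1.0, 0.25),
    Metric("customer_satisfaction", 0.4, 1.0, 0.25),
]

balanced = composite_score(balanced_team)
revenue_chasing = composite_score(revenue_chasing_team)
```

Under these assumed numbers, the team that doubles its revenue target while neglecting the other dimensions scores well below the balanced team. The specific weights and the floor would need to be set, and periodically revisited, by the people who understand the business context.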

A second critical lesson from AI is the principle of designing for robustness and anticipating adversarial behaviors. In machine learning, this involves developing models that are resilient to intentional manipulation or unforeseen data shifts. For organizational metrics, this translates to a proactive "stress-testing" approach during the design phase. Leaders should actively anticipate how a proposed metric could be gamed or distorted, involving diverse stakeholders, including those with incentives to exploit loopholes, in the design and review process. This "red teaming" approach can uncover potential vulnerabilities before they manifest as systemic problems. For example, if a call center aims to reduce average handle time (AHT), a potential gaming strategy might involve transferring complex calls or prematurely ending conversations. A robust metric design would counterbalance AHT with first-call resolution rates, customer satisfaction scores, and perhaps even a qualitative review of a sample of calls. By systematically mapping out potential gaming pathways and building in counter-measures or balancing metrics, organizations can create a more resilient performance measurement ecosystem, reducing the likelihood of unintended consequences and ethical lapses.
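The call center example can be sketched as a simple cross-check, shown here in Python. This is an assumption-laden illustration, not an established tool: the `Period` record, the `flag_possible_gaming` function, the 2% tolerance, and the sample data are all hypothetical. The idea is only to flag periods in which the target metric (AHT) improved while a balancing metric deteriorated, so that a human can review what actually happened.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Period:
    label: str
    aht_seconds: float  # average handle time (lower is better)
    fcr_rate: float     # first-call resolution rate, 0..1 (higher is better)
    csat: float         # customer satisfaction score (higher is better)


def flag_possible_gaming(prev: Period, curr: Period, tolerance: float = 0.02) -> list[str]:
    """Return the balancing metrics that worsened while AHT improved.

    A balancing metric counts as worsened if it dropped by more than
    `tolerance` (relative) compared with the previous period.
    An empty list means no warning sign was detected by this check.
    """
    if curr.aht_seconds >= prev.aht_seconds:
        return []  # the target metric did not improve, nothing to cross-check
    worsened = []
    if curr.fcr_rate < prev.fcr_rate * (1 - tolerance):
        worsened.append("fcr_rate")
    if curr.csat < prev.csat * (1 - tolerance):
        worsened.append("csat")
    return worsened


# Illustrative (made-up) data.
baseline = Period("Q1", aht_seconds=420, fcr_rate=0.80, csat=4.2)
suspicious = Period("Q2", aht_seconds=330, fcr_rate=0.68, csat=3.7)
healthy = Period("Q2", aht_seconds=400, fcr_rate=0.81, csat=4.2)

suspicious_flags = flag_possible_gaming(baseline, suspicious)
healthy_flags = flag_possible_gaming(baseline, healthy)
```

A flag here is a prompt for the qualitative call review mentioned above, not proof of gaming: handle time and resolution rates can move in opposite directions for legitimate reasons, such as a change in call mix.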

The third strategy draws from the AI concept of dynamic adaptation and continuous learning. Rather than remaining static, advanced AI systems are often designed to learn and adapt over time, continuously refining their models based on new data and evolving environments. Similarly, organizational metrics should not be treated as immutable. The business environment is constantly changing, and what constitutes an effective measure today might be obsolete or counterproductive tomorrow. This necessitates an iterative approach to performance management, where KPIs are regularly reviewed, adjusted, and even retired based on their actual impact and alignment with strategic goals. Implementing feedback loops, perhaps through A/B testing of different metric configurations or periodic "metric effectiveness audits," allows organizations to learn from their own data. For instance, a tech company might initially track user engagement through daily active users. However, if this metric leads to superficial product changes designed solely to boost daily logins, the company might shift its priority to metrics like feature adoption rates, time spent in core value-generating activities, or user retention over longer periods, reflecting a deeper understanding of sustainable engagement. This agility in metric management ensures that performance indicators remain relevant and genuinely drive desired outcomes.
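One possible form of a "metric effectiveness audit" is to check whether a proxy metric still moves together with the outcome it is meant to represent. The Python sketch below assumes a simple version of this: it compares the Pearson correlation between a proxy (daily active users) and an outcome (longer-term retention) in the earliest and latest windows of a time series. The function names, the window size, the `min_corr` threshold, and the data are hypothetical, and correlation over a handful of periods is only a rough signal, not a causal test.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient; returns 0.0 if either series is constant."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def audit_proxy(proxy: list[float], outcome: list[float],
                window: int = 4, min_corr: float = 0.5) -> dict:
    """Compare proxy/outcome correlation in the first and last `window` periods.

    Marks the proxy for review when the most recent correlation
    falls below `min_corr` (a hypothetical threshold).
    """
    early = pearson(proxy[:window], outcome[:window])
    late = pearson(proxy[-window:], outcome[-window:])
    return {"early_corr": early, "late_corr": late, "needs_review": late < min_corr}


# Illustrative (made-up) monthly data: daily active users keep rising,
# but 90-day retention stalls and then declines.
daily_active_users = [100, 110, 120, 130, 150, 170, 190, 210]
retention_90d = [0.30, 0.32, 0.34, 0.36, 0.35, 0.34, 0.33, 0.31]

audit = audit_proxy(daily_active_users, retention_90d)
```

With this assumed data, the proxy tracks retention closely in the early months and moves against it in the later ones, so the audit marks it for review. In practice, such a result would open a conversation about adjusting or retiring the metric rather than settle it.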

Finally, AI research underscores the importance of focusing on latent variables and underlying causal mechanisms, rather than just easily observable outputs. In complex AI models, the true drivers of performance often lie in abstract, unobservable "latent variables" that influence the measurable outcomes. For organizations, this means shifting the focus from easily quantifiable but potentially superficial outputs to the deeper processes, behaviors, and long-term impacts that truly generate value. This is arguably the most challenging but also the most potent strategy against Goodhart’s Law. Instead of merely measuring the number of products shipped, a company might invest in measuring the quality of the product development process, the degree of cross-functional collaboration, or the true market need addressed by innovation. While harder to quantify, these "latent" drivers of success are far less susceptible to gaming and directly align with sustainable growth. For example, in academic institutions, a focus purely on publication count might lead to a proliferation of low-quality papers. A more effective approach, inspired by this principle, would involve qualitative assessments of research impact, peer review processes, and the development of junior scholars – indicators that speak to the underlying health and productivity of the research environment.

The integration of these AI-inspired principles into performance management frameworks offers a transformative pathway for businesses operating in an increasingly data-driven and competitive global landscape. Companies that master the art of designing resilient, adaptive, and truly meaningful metrics will gain a significant competitive advantage. This approach mitigates the risks of corporate scandals and regulatory penalties, improves internal efficiency, and, most importantly, fosters a culture where employees are genuinely incentivized to create long-term value rather than merely optimizing for short-term, easily manipulated targets. As organizations continue their digital transformation journeys, the lessons from artificial intelligence extend beyond algorithmic efficiency to fundamentally redefine how human performance is measured, managed, and motivated for sustainable success.