The infamous 2016 Wells Fargo scandal, in which employees created millions of unauthorized customer accounts, stands as a stark illustration of a pervasive corporate challenge: the unintended consequences of aggressive performance measurement. Driven by an internal mandate to cross-sell eight financial products per customer, the bank’s incentive structure fostered a culture of gaming that led to ethical breaches, reputational damage, and significant financial penalties. This episode, while dramatic, reflects a broader phenomenon captured by Goodhart’s Law: "When a measure becomes a target, it ceases to be a good measure." Despite decades of awareness of this pitfall, organizations globally continue to grapple with metric fixation, which produces suboptimal outcomes, misallocated resources, and stifled innovation.

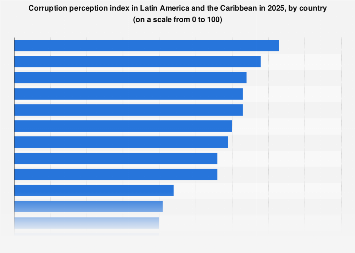

Traditional approaches to performance management, such as the balanced scorecard or cascading Key Performance Indicators (KPIs), have long aimed to provide a holistic view of organizational health. However, their efficacy is often undermined by their vulnerability to narrow optimization. When targets are set, human ingenuity, coupled with inherent psychological biases, often prioritizes meeting the metric itself over achieving the underlying strategic objective it was designed to represent. This can manifest as superficial compliance, data manipulation, or a neglect of unmeasured but critical aspects of performance, eroding long-term value and fostering distrust within the workforce. The economic toll of such misaligned incentives is substantial, extending beyond fines and legal costs to encompass diminished employee morale, high turnover rates, and a tangible loss of customer loyalty and market share. Globally, industries from healthcare to education have experienced similar distortions, where standardized metrics, intended to improve quality or efficiency, instead incentivize superficial improvements or even detrimental practices.

A sophisticated re-evaluation of performance measurement is urgently needed, and insights from the rapidly evolving fields of artificial intelligence (AI) and machine learning (ML) offer a promising new lens. Organizational leaders and AI researchers share a common challenge: optimizing complex systems based on proxy measures that can, and often do, diverge from the true desired outcomes. An ML model, for instance, is trained to minimize a ‘loss function’ (a mathematical proxy for its performance), but its ultimate goal is to generalize well to unseen data, not merely to perform perfectly on its training set. When a model is optimized too closely to its training data, it "overfits": it fits the noise and quirks of that data and performs poorly on new inputs. This mirrors how an organization can "overfit" to specific KPIs, performing well on the measured metrics but failing to achieve broader strategic objectives or adapt to market changes. By drawing parallels between the challenges of AI training and organizational performance, leaders can derive robust strategies to design more resilient and meaningful metrics. The analogy has limits: unlike algorithms, organizations consist of individuals with complex motivations and agency. Even so, these AI-inspired frameworks offer useful conceptual tools that leaders can adapt to human settings.
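
The overfitting analogy can be made concrete with a toy example. The sketch below is illustrative only: the data, the alternating noise pattern, and the helper names (`fit_line`, `memorize`, `mse`) are assumptions invented for this illustration, not drawn from any real system. It compares a model that memorizes every training point with a simple straight-line fit.

```python
# Toy illustration of overfitting (all data here is hypothetical).
# The true relationship is y = 2x; each training observation also carries
# noise that alternates between +1 and -1.
train_x = list(range(8))
train_y = [2 * x + (1 if x % 2 == 0 else -1) for x in train_x]

# Unseen inputs fall between the training points and follow the true rule.
test_x = [x + 0.4 for x in range(7)]
test_y = [2 * x for x in test_x]


def fit_line(xs, ys):
    """Ordinary least-squares fit of a straight line; returns a predictor."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept


def memorize(xs, ys):
    """Nearest-neighbour lookup: reproduces the training data exactly."""
    def predict(x):
        nearest = min(range(len(xs)), key=lambda i: abs(xs[i] - x))
        return ys[nearest]
    return predict


def mse(model, xs, ys):
    """Mean squared error of a predictor on a data set."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


line = fit_line(train_x, train_y)
memorizer = memorize(train_x, train_y)

for name, model in [("line", line), ("memorizer", memorizer)]:
    print(f"{name:10s} train MSE = {mse(model, train_x, train_y):.3f}  "
          f"test MSE = {mse(model, test_x, test_y):.3f}")
```

The memorizer achieves zero error on the training data yet does worse than the straight line on the unseen inputs, because it has reproduced the noise along with the signal. An organization that hits every KPI while missing its strategic objective is in a comparable position.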

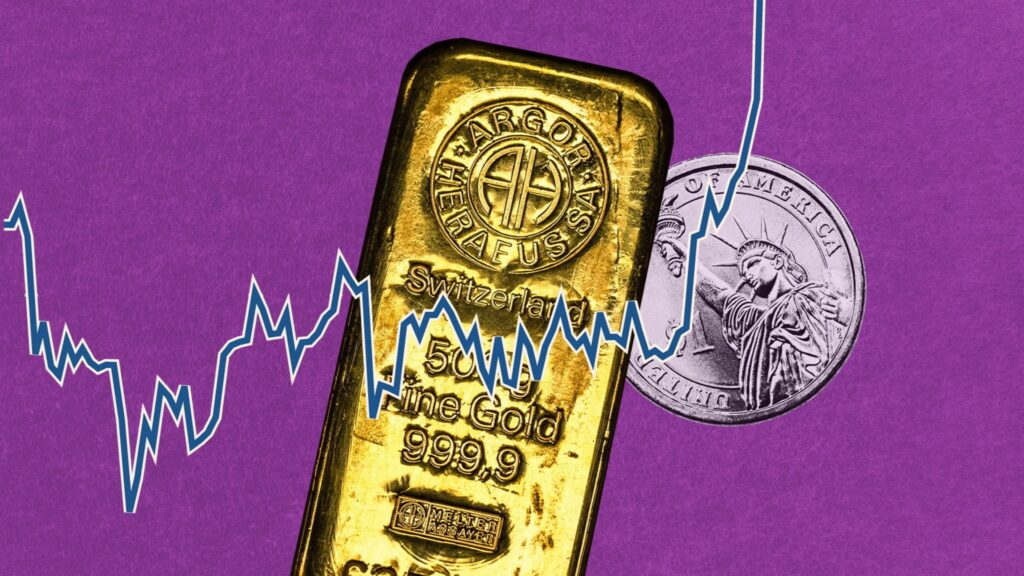

One critical strategy derived from AI principles is to emphasize generalization over narrow optimization. Just as a robust AI model performs well across a diverse range of inputs rather than excelling only on specific training examples, organizational performance metrics should be designed to encourage broad, sustainable success. This means moving beyond single, highly specific KPIs that invite gaming. Instead, organizations should deploy a balanced portfolio of metrics, including qualitative measures and leading indicators, that collectively reflect the multifaceted nature of strategic goals. For instance, rather than solely measuring "number of new customer accounts," a financial institution might also track "customer lifetime value," "satisfaction scores," and "referral rates." This diversification makes it harder to game any single metric without negatively impacting others, forcing employees to consider the broader context of their actions. Companies like Microsoft, in transforming their commercial sales, have shifted from activity-based metrics to outcome-based metrics, focusing on customer success and adoption rather than mere product sales, aligning incentives with long-term value creation.
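
One way to see why a portfolio resists gaming is to aggregate it so that a collapse in any one metric pulls the whole score down. The following is a minimal sketch under stated assumptions: the metric values are hypothetical and pre-normalized to the range (0, 1], and the geometric mean is just one possible aggregation chosen for illustration, not a recommended standard.

```python
from statistics import geometric_mean

# Hypothetical metric values, each already normalized to the range (0, 1].
balanced_branch = {
    "new_accounts": 0.6,
    "customer_lifetime_value": 0.6,
    "satisfaction": 0.6,
    "referral_rate": 0.6,
}
gaming_branch = {
    "new_accounts": 1.0,  # inflated by opening accounts customers do not need
    "customer_lifetime_value": 0.3,
    "satisfaction": 0.3,
    "referral_rate": 0.3,
}


def portfolio_score(metrics):
    """Geometric mean of the metrics: one weak metric drags the score down."""
    return geometric_mean(list(metrics.values()))


for name, branch in [("balanced", balanced_branch), ("gaming", gaming_branch)]:
    print(f"{name:9s} new_accounts = {branch['new_accounts']:.2f}  "
          f"portfolio = {portfolio_score(branch):.2f}")
```

Judged on "new accounts" alone, the gaming branch looks like the top performer; judged on the portfolio, it ranks below the balanced branch. Real implementations would also need defensible normalization and weighting, which this sketch leaves out.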

Second, organizations should apply adversarial thinking and robustness testing to KPI design. In machine learning, adversarial training deliberately exposes a model to perturbed inputs during training so that it becomes more resilient to malicious inputs and unexpected variations. Similarly, leaders should proactively "red team" their proposed performance metrics, asking: "How could this metric be gamed? What perverse incentives does it create? What negative externalities might arise if employees optimize solely for this number?" This means stress-testing the entire measurement system by simulating scenarios in which employees meet targets through undesirable means. For example, if "speed of service" is a key metric, an adversarial review would anticipate lower service quality or more errors. By identifying these vulnerabilities before implementation, organizations can add "guardrail" metrics or refine the primary KPIs to mitigate the risks. This foresight, akin to that of cybersecurity professionals probing for system weaknesses, helps build more resilient measurement frameworks.
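
A guardrail check of this kind can be expressed in a few lines. The sketch below assumes a hypothetical "speed of service" KPI with two guardrails; the metric names, the numbers, the 5% tolerance, and the `Metric`, `improvement`, and `gaming_warnings` helpers are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    higher_is_better: bool


def improvement(metric, before, after):
    """Relative change, signed so that positive always means 'got better'.

    Assumes a non-zero baseline value for the metric.
    """
    change = (after[metric.name] - before[metric.name]) / abs(before[metric.name])
    return change if metric.higher_is_better else -change


def gaming_warnings(primary, guardrails, before, after, tolerance=0.05):
    """List guardrail metrics that worsened by more than `tolerance`
    while the primary KPI improved."""
    if improvement(primary, before, after) <= 0:
        return []
    return [g.name for g in guardrails
            if improvement(g, before, after) < -tolerance]


# Hypothetical "speed of service" KPI with two guardrails.
speed = Metric("tickets_per_hour", higher_is_better=True)
guardrails = [
    Metric("error_rate", higher_is_better=False),
    Metric("satisfaction", higher_is_better=True),
]
before = {"tickets_per_hour": 10.0, "error_rate": 0.02, "satisfaction": 4.5}
after = {"tickets_per_hour": 14.0, "error_rate": 0.05, "satisfaction": 4.4}

flagged = gaming_warnings(speed, guardrails, before, after)
print(flagged)  # prints ['error_rate']
```

Here throughput rose by 40% while the error rate more than doubled, so the error-rate guardrail is flagged; the small dip in satisfaction stays within tolerance. A flag is a prompt for investigation, not proof of gaming.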

A third pivotal insight is to focus relentlessly on causal linkages and the "true north" objective. AI systems often struggle when their proxy objectives (e.g., maximizing a specific score in a game) diverge from the true, ultimate goal (e.g., winning the game intelligently and robustly). In organizations, this means ensuring that chosen metrics are not just easily quantifiable proxies, but genuinely reflect the core business value or strategic imperative. A common pitfall is to measure activities rather than outcomes. For example, in a customer service context, measuring "number of calls handled" is an activity metric; a more "true north" outcome metric would be "customer issue resolution rate" or "first-contact resolution." Regularly auditing the causal chain between a KPI and its intended strategic outcome is essential. This involves asking: "Does improving this metric actually lead to the desired business result?" If the link is weak or broken, the metric needs re-evaluation. This also entails a continuous process of recalibration, acknowledging that market dynamics and strategic priorities evolve, and static metrics can quickly become obsolete or misleading.

Finally, an AI-inspired approach champions dynamic and adaptive measurement systems. Many production AI systems are not static; they are monitored and retrained as new data arrives so that they stay accurate when conditions change. Organizational performance systems should similarly be designed for evolution, not rigidity. Fixed annual KPI reviews can be too slow to respond to rapid market shifts. Instead, leaders should implement mechanisms for real-time feedback, A/B testing of different metric configurations, and iterative adjustments. This might involve using analytics to identify anomalies or unintended correlations in performance data, which can signal that a metric is driving unexpected behaviors. Employee feedback, gathered through regular pulse surveys or qualitative discussions, can also serve as a crucial "human feedback loop," providing early warnings about metric-induced stress or gaming. This agile approach to measurement fosters a culture of continuous learning and improvement, helping KPIs remain relevant and effective drivers of genuine value.
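
As a rough illustration of anomaly detection on performance data, the sketch below flags observations that sit far outside the range of the preceding weeks. The weekly figures, the five-week window, the threshold of three standard deviations, and the `flag_anomalies` helper are assumptions chosen for the example; a real system would need thresholds tuned to its own data.

```python
from statistics import mean, stdev


def flag_anomalies(values, window=5, threshold=3.0):
    """Return indices whose value lies more than `threshold` standard
    deviations from the mean of the preceding `window` observations."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        spread = stdev(history)
        if spread == 0:
            continue  # a flat history gives no scale to judge the deviation
        z_score = (values[i] - mean(history)) / spread
        if abs(z_score) > threshold:
            flagged.append(i)
    return flagged


# Hypothetical weekly counts of new accounts; the final week jumps sharply.
weekly_new_accounts = [100, 102, 98, 101, 99, 103, 100, 160]
suspicious_weeks = flag_anomalies(weekly_new_accounts)
print(suspicious_weeks)  # prints [7]
```

Only the final week is flagged. A sudden jump like this may reflect a genuine success or a gamed metric, so the flag is best treated as a trigger for the human feedback loop rather than a verdict.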

Implementing these AI-inspired strategies is not without its challenges. It demands a fundamental shift in organizational culture, from a rigid, top-down approach to a more collaborative and transparent one. Leadership commitment is paramount: leaders must invest in robust data infrastructure, analytics capabilities, and employee training on the rationale behind measurement changes. It also requires a willingness to tolerate ambiguity and embrace a learning mindset, acknowledging that perfect metrics are elusive but that continuously improving them is a worthwhile endeavor.

In an increasingly complex global economy, where competitive advantage hinges on agility and genuine innovation, organizations can no longer afford the costly consequences of metric fixation. By learning from the sophisticated techniques employed in AI and machine learning, businesses can design performance measurement systems that are not merely mechanisms for accountability, but powerful engines for ethical behavior, sustainable growth, and true strategic alignment. This paradigm shift promises to transform KPIs from potential sources of corporate dysfunction into reliable compasses guiding organizations toward their authentic goals.